Deep learning has come a long way in both industrial and commercial applications. It relies on neural networks built from many layers stacked on top of one another, so that information passes through the network layer by layer.

In fact, Yann LeCun, a renowned computer scientist, has described adversarial training as the most interesting idea in machine learning in the last ten years. Since they were first introduced in 2014 by Ian Goodfellow and his colleagues, Generative Adversarial Networks (GANs) have become popular across machine learning and deep learning for their ability to generate new data that resembles the data they were trained on.

It is well known that an ordinary neural network can be fooled into misclassifying an object when only a small amount of noise is added to the original data. The network then often reports a high confidence value for the wrong answer.

This phenomenon is often attributed to overfitting in our machine learning or deep learning model. However, even when the mapping between the input and the output is almost linear, a slight change in each feature of the input can add up to a large error in the output. This sensitivity to adversarial inputs is part of the background against which GANs came into existence.
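To make the effect of small noise on a nearly linear model concrete, here is a minimal sketch in plain Python. The weights, the input, and the step size `eps` are made-up values for illustration, and shifting each feature against the sign of its weight is just one common way to construct such noise.

```python
import math

def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))

def predict(weights, x):
    """Confidence of a linear classifier that x belongs to class 1."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)))

def perturb(weights, x, eps):
    """Shift every feature by eps in the direction that lowers the score."""
    return [xi - eps * math.copysign(1.0, w) for w, xi in zip(weights, x)]

# Hypothetical 100-feature model and input, chosen only for illustration.
weights = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]
x = [1.04 if i % 2 == 0 else 0.96 for i in range(100)]

x_noisy = perturb(weights, x, eps=0.1)
clean_conf = predict(weights, x)
noisy_conf = predict(weights, x_noisy)
print(f"clean: {clean_conf:.3f}, perturbed: {noisy_conf:.3f}")
```

No single feature moves by more than 0.1, yet the many small shifts add up across 100 features and the predicted class flips.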

Working of GANs

Every GAN has two components, the generator and the discriminator, and each of them is a neural network. The generator is responsible for generating new instances of data, while the discriminator judges whether each instance belongs to the original dataset or not.

The generator takes some random input and creates a new output, which it passes to the discriminator. In most image applications, the input is a set of random numbers and the output is a new synthetic image, for example of a painting or a famous object. These images may look authentic, but in reality they are artificial samples that only resemble the images in our training set. Passing a fake image off as an original one without being caught is the primary job of the generator; catching it is the job of the discriminator.

Steps That GANs Have to Undergo

- The generator takes in random numbers (noise) and generates an output, which can be an image or an object.

- This generated image is fed into the discriminator along with real images taken from the original dataset.

- The discriminator takes in both the real and the fake inputs and returns a probability for each one, which can be anything between 0 and 1.

- A value close to 1 denotes an output judged to be authentic, while a value close to 0 denotes an artificial one. These steps are repeated so that the discriminator gets better at telling real from fake and the generator gets better at producing outputs that pass as real.
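The scoring step can be sketched in a few lines of Python. The one-parameter discriminator and the sample values below are hypothetical, and binary cross-entropy is assumed as the loss because it is the usual choice for a real-versus-fake classifier.

```python
import math

def discriminator(x, w=2.0, b=-3.0):
    """Toy discriminator: maps a number to a probability of being real."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))

def bce(prob, label):
    """Binary cross-entropy for one sample (label 1 = real, 0 = fake)."""
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))

real_samples = [2.9, 3.1, 3.4]   # stand-ins for samples from the dataset
fake_samples = [0.2, 0.5, 1.0]   # stand-ins for generator output

real_scores = [discriminator(x) for x in real_samples]
fake_scores = [discriminator(x) for x in fake_samples]

# The loss grows when real samples score low or fake samples score high.
total = sum(bce(p, 1) for p in real_scores) + sum(bce(p, 0) for p in fake_scores)
d_loss = total / (len(real_scores) + len(fake_scores))
print(real_scores, fake_scores, d_loss)
```

Every score lies strictly between 0 and 1, and the loss shrinks as the real scores move toward 1 and the fake scores move toward 0.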

Different Types of GANs

Conditional GAN: A conditional GAN, also known as CGAN, is a GAN that is given some extra conditioning information, such as a class label. The label is added as an additional input to the generator so that it produces the required kind of output. The labels are typically also included in the input to the discriminator, which helps it distinguish the real data from the generated data.
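One common way to supply the condition is to append a one-hot encoded label to the input vector. The sketch below assumes that approach; the function names, the noise size, and the number of classes are hypothetical.

```python
import random

def one_hot(label, num_classes):
    return [1.0 if i == label else 0.0 for i in range(num_classes)]

def generator_input(noise, label, num_classes):
    """Conditional generator input: the noise followed by the one-hot label."""
    return noise + one_hot(label, num_classes)

def discriminator_input(sample, label, num_classes):
    """Conditional discriminator input: the sample followed by the same label."""
    return sample + one_hot(label, num_classes)

random.seed(0)
noise = [random.gauss(0.0, 1.0) for _ in range(4)]
g_in = generator_input(noise, label=2, num_classes=3)
d_in = discriminator_input([0.3, 0.7], label=2, num_classes=3)
print(len(g_in), g_in[-3:], d_in)
```

Both networks see the same label, so the generator is pushed to produce a sample of that class rather than any realistic sample.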

Vanilla GAN: This is the simplest GAN in the family. Both the generator and the discriminator are plain multi-layer perceptrons. The algorithm is straightforward: it optimizes the GAN objective function using gradient descent.
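The equation being optimized is usually taken to be the minimax objective from the original 2014 GAN paper. It is reproduced here as added context, with $G$ the generator, $D$ the discriminator, $x$ a real sample, and $z$ the random input to the generator:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

The discriminator tries to make $D(x)$ close to 1 and $D(G(z))$ close to 0, which maximizes $V$, while the generator tries to make $D(G(z))$ close to 1, which minimizes it.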

Deep convolutional GAN: The DCGAN is one of the most popular and widely implemented kinds of GAN. It is made of ConvNets instead of multi-layer perceptrons. The ConvNets are implemented without max pooling, which is replaced by strided convolutions, and the layers are not fully connected to each other.
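DCGAN-style networks typically change the image size with strided and transposed convolutions rather than pooling layers. The sketch below only computes the resulting feature-map sizes; the kernel size 4, stride 2, and padding 1 are commonly used settings assumed here for illustration.

```python
def conv_out(size, kernel, stride, padding):
    """Output width of a strided convolution (used in the discriminator)."""
    return (size + 2 * padding - kernel) // stride + 1

def conv_transpose_out(size, kernel, stride, padding):
    """Output width of a transposed convolution (used in the generator)."""
    return (size - 1) * stride - 2 * padding + kernel

# Generator: upsample a 4x4 feature map step by step.
gen_sizes = [4]
for _ in range(4):
    gen_sizes.append(conv_transpose_out(gen_sizes[-1], kernel=4, stride=2, padding=1))

# Discriminator: downsample a 64x64 image step by step.
disc_sizes = [64]
for _ in range(4):
    disc_sizes.append(conv_out(disc_sizes[-1], kernel=4, stride=2, padding=1))

print(gen_sizes)   # [4, 8, 16, 32, 64]
print(disc_sizes)  # [64, 32, 16, 8, 4]
```

With these settings each layer doubles or halves the width, so the two networks mirror each other.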

Applications of GANs

Animation Characters

It takes a lot of time and money to create new animation characters for long series or movies on a regular basis. A big production house that produces a large amount of animation content every quarter needs many new fictional characters for every new show or movie. GANs can play an important role here by creating new characters from existing character data.

Inspired by this, several people have tried to generate Pokemon characters, for example in the PokeGAN project and in a DCGAN-based project. Both of these produced some really interesting outputs.

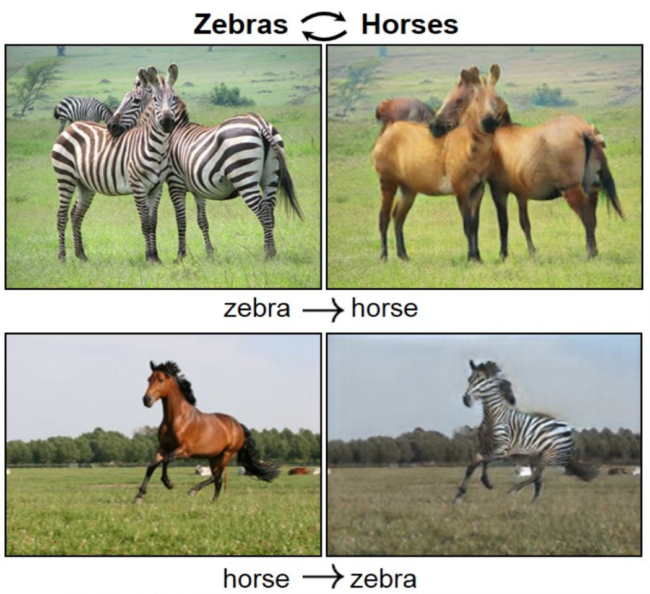

Animals

Some applications of GANs are quite common in the commercial space. One of them is generating a painting from a photograph of scenery, which is done using CycleGAN. On top of this, CycleGAN can even transform pictures of horses into zebras and vice versa.

CycleGAN trains two generator networks: one translates images from the first domain to the second, and the other translates them back in the reverse direction. A discriminator for each domain judges the quality of the generated images by deciding whether an image is real or generated.
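CycleGAN ties the two directions together with a cycle-consistency term: translating an image to the other domain and back should return something close to the original. The sketch below illustrates that idea only; the two "translators" are hypothetical arithmetic functions standing in for trained networks, and the images are short lists of numbers.

```python
def a_to_b(x):
    """Hypothetical translator from domain A to domain B."""
    return [2.0 * v + 1.0 for v in x]

def b_to_a(y):
    """Hypothetical translator from domain B back to domain A."""
    return [(v - 1.0) / 2.0 for v in y]

def l1(a, b):
    """Mean absolute difference between two equally sized samples."""
    return sum(abs(p - q) for p, q in zip(a, b)) / len(a)

def cycle_loss(x, y, forward, backward):
    """A -> B -> A and B -> A -> B should both return to the starting sample."""
    return l1(backward(forward(x)), x) + l1(forward(backward(y)), y)

x = [0.0, 0.5, 1.0]   # stand-in for an image from domain A
y = [1.0, 3.0]        # stand-in for an image from domain B

good = cycle_loss(x, y, a_to_b, b_to_a)
bad = cycle_loss(x, y, a_to_b, lambda s: [v / 2.0 for v in s])
print(good, bad)
```

A pair of translators that undo each other gives a loss of zero, while a mismatched pair is penalized. In a real CycleGAN this term is added to the usual adversarial losses.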

Human Faces

We have come a long way in constructing artificial human faces by training GANs on existing photographs. The output looks so realistic that a person who has no idea what happened at the backend would not be able to say that it is a generated image. Such is the power of GANs.

These outputs were mostly generated from celebrity faces: the inputs were some famous faces, and the outputs were a bit different from the originals but a bit improved. Do have a look at our article on Deepfake, which gives you a clearer picture of this.

While training the generator, keep the discriminator's weights constant, and while training the discriminator, keep the generator's weights constant. Each component must train against a static adversary. At times, pretraining the discriminator, for example on the MNIST dataset, before you start training the generator can also give the generator a clearer gradient to follow.
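The alternating scheme can be sketched with a deliberately tiny one-dimensional GAN in plain Python. Everything here is an assumption made for illustration: the linear generator `a * z + b`, the logistic discriminator, the hand-derived gradients, the learning rate, and the "real" data drawn from a normal distribution. The generator step uses the commonly used `-log D(G(z))` loss.

```python
import math
import random

def sigmoid(t):
    # Numerically stable form that avoids overflow for large |t|.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def generate(g, z):
    a, b = g
    return a * z + b

def discriminate(d, x):
    w, c = d
    return sigmoid(w * x + c)

def discriminator_step(d, g, real_x, z, lr):
    """Update the discriminator only; the generator g is read, never changed."""
    w, c = d
    fake_x = generate(g, z)
    p_real, p_fake = discriminate(d, real_x), discriminate(d, fake_x)
    # Gradients of -log D(real) - log(1 - D(fake)) with respect to w and c.
    grad_w = (p_real - 1.0) * real_x + p_fake * fake_x
    grad_c = (p_real - 1.0) + p_fake
    return (w - lr * grad_w, c - lr * grad_c)

def generator_step(g, d, z, lr):
    """Update the generator only; the discriminator d is read, never changed."""
    a, b = g
    w, _ = d
    p_fake = discriminate(d, generate(g, z))
    # Gradients of -log D(G(z)) with respect to a and b.
    grad_a = (p_fake - 1.0) * w * z
    grad_b = (p_fake - 1.0) * w
    return (a - lr * grad_a, b - lr * grad_b)

random.seed(0)
g, d = (1.0, 0.0), (0.1, 0.0)
for step in range(200):
    real_x = random.gauss(4.0, 0.5)   # hypothetical "real" data
    z = random.gauss(0.0, 1.0)        # random input to the generator
    d = discriminator_step(d, g, real_x, z, lr=0.05)
    g = generator_step(g, d, z, lr=0.05)
print("generator:", g, "discriminator:", d)
```

Because the parameters are stored as immutable tuples, each step can only return new values for the network it is training, which makes the static adversary explicit.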

End-Notes

GANs are very fussy and can take a lot of time and resources to train. Training often calls for one or more GPUs, and on a CPU alone it can take days to train the model. There is also a possibility that one of the main components of the GAN may overpower the other. For example, if the discriminator becomes too strong or is over-fitted, it returns values very close to 0 or 1. This affects the generator, which then receives almost no useful gradient to learn from.
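The vanishing gradient can be seen with a little arithmetic. The sketch below assumes the discriminator ends in a sigmoid and looks at the gradient the generator receives with respect to the discriminator's pre-sigmoid output (the logit). It compares the original `log(1 - D)` generator loss with the `-log(D)` alternative that is often used in practice; both are added context rather than something stated above.

```python
import math

def sigmoid(t):
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def saturating_grad(logit):
    """Derivative of log(1 - D) with respect to the logit, where D = sigmoid(logit)."""
    return -sigmoid(logit)

def non_saturating_grad(logit):
    """Derivative of -log(D) with respect to the logit."""
    return sigmoid(logit) - 1.0

# A very negative logit means the discriminator is sure the sample is fake.
for logit in (0.0, -5.0, -10.0):
    print(f"D={sigmoid(logit):.5f}  "
          f"saturating={saturating_grad(logit):.5f}  "
          f"non-saturating={non_saturating_grad(logit):.5f}")
```

As the discriminator's output for fake samples approaches 0, the first gradient shrinks toward zero while the second stays close to -1, which is one reason the second form is often preferred.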