Kerberos is a network authentication protocol that was developed at MIT and has become one of the most widely used authentication approaches. A Hadoop cluster needs Kerberos to be secure because, under the default authentication, Hadoop and all machines in the cluster trust whatever user credentials are presented. Kerberos closes this gap by verifying the identity of users. Identity verification is implemented through a client/server model.

Several terms are used when implementing Kerberos identity verification. An identity that needs to be verified is referred to as a principal. Principals fall into two categories, namely user principals and service principals. User principal names (UPN) refer to users, who are similar to users in an operating system. Service principal names (SPN) refer to services accessed by a user, such as a database. A realm in Kerberos is an administrative domain for authentication. Principals are assigned to specific realms in order to demarcate boundaries and simplify administration.

Information on principals and realms resides in a key distribution center (KDC). It is therefore very important to put physical and network security measures in place to protect the KDC, because if it is compromised the entire realm is compromised. The Kerberos database, the authentication service (AS) and the ticket granting service (TGS) form the KDC. The Kerberos database is the repository of all principals and realms. The AS issues a ticket granting ticket (TGT) when a client makes an authentication request. The TGS validates that ticket and issues service tickets.

This tutorial demonstrates setting up a KDC and a client at a very basic level. Kerberos is a very broad topic, and the reader is advised to refer to its documentation for an exhaustive discussion.

Implementing Kerberos authentication in Hadoop requires several steps, which are listed below.

- The first step is to create a key distribution center (KDC) for the Hadoop cluster. It is advisable to use a KDC that is separate from any other existing KDC.

- The second step is to create service principals for each of the Hadoop services, for example mapreduce, yarn and hdfs.

- The third step is to create encrypted Kerberos keys (keytabs) for each service principal.

- The fourth step is to distribute the keytabs for service principals to each of the cluster nodes.

- The last step is to configure all services to rely on Kerberos authentication.
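Steps two and three can be sketched as a dry run that only prints the commands an administrator might run on the KDC. This is a minimal sketch under assumptions, not the tutorial's own procedure: the host names, the keytab directory and the short service names (hdfs, yarn, mapred) are hypothetical, and nothing is executed against a real KDC.

```shell
# Dry run: print kadmin.local commands for creating service principals
# and exporting their keys to keytab files. Nothing is executed.
# REALM matches the tutorial; HOSTS, SERVICES and KEYTAB_DIR are
# hypothetical values chosen for illustration.
REALM="EDUONIX.COM"
SERVICES="hdfs yarn mapred"
HOSTS="node1.eduonix.com node2.eduonix.com"
KEYTAB_DIR="/etc/security/keytabs"

print_principal_commands() {
  for service in $SERVICES; do
    for host in $HOSTS; do
      principal="${service}/${host}@${REALM}"
      # addprinc -randkey creates the principal with a random key.
      echo "kadmin.local -q \"addprinc -randkey ${principal}\""
      # ktadd -k writes the principal's keys into the named keytab file.
      echo "kadmin.local -q \"ktadd -k ${KEYTAB_DIR}/${service}.${host}.keytab ${principal}\""
    done
  done
}

print_principal_commands
```

Printing the commands first makes it easy to review the principal names before anything is created. Each keytab would then be copied to the node named in its principal, which corresponds to the fourth step.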

Before we implement authentication, let us run a command on Hadoop. After Kerberos has been implemented successfully, it will not be possible to run commands without authentication.

hadoop fs -mkdir /usr/local/kerberos

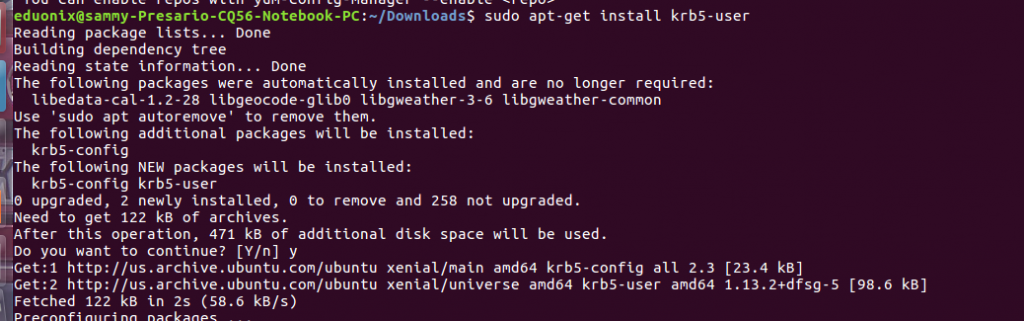

The Kerberos server must be installed on a machine with a fully qualified domain name (FQDN) because the domain name is used as the realm name. This tutorial uses the realm name EDUONIX.COM, so substitute the FQDN that points to your server.

Before we can begin implementing Kerberos authentication we need to install the client and the server. In a cluster you designate one node to act as the KDC server, and the other nodes are clients from which you can request tickets. The command below installs the client, which will be used to request tickets from the KDC.

sudo apt install krb5-user libpam-krb5 libpam-ccreds auth-client-config

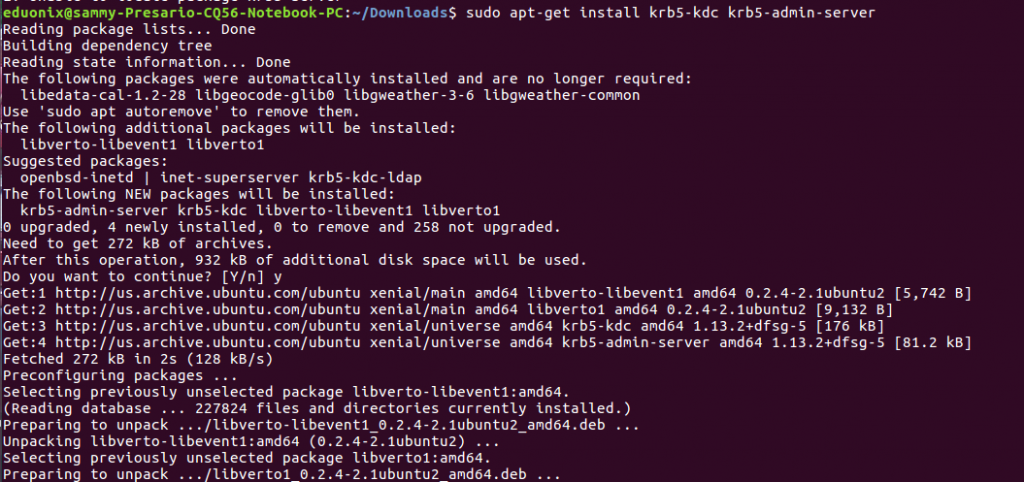

To install the server and KDC use this command

sudo apt-get install krb5-kdc krb5-admin-server

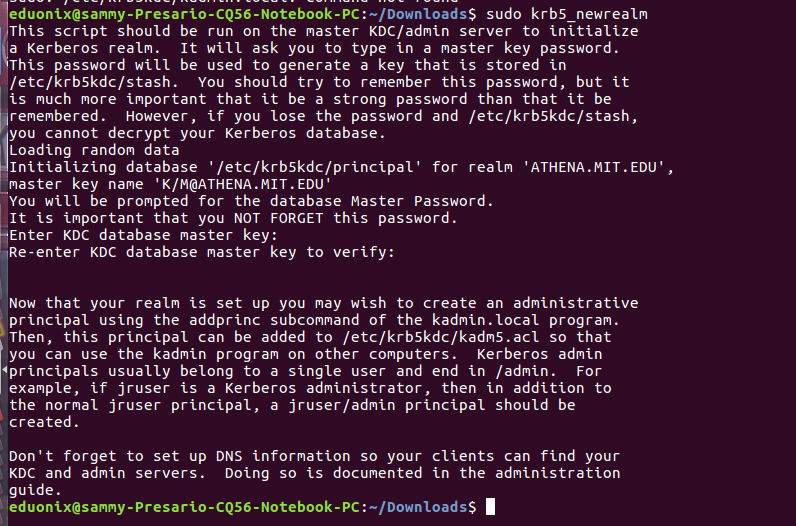

Run the command below to initialize a new realm on the machine that will act as the KDC server. Enter a password when prompted for one.

sudo krb5_newrealm

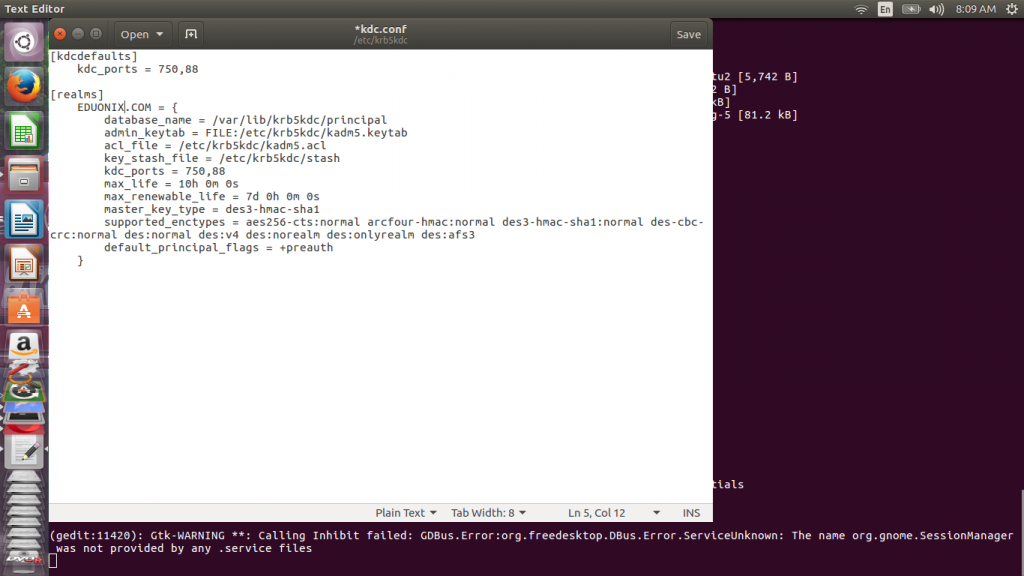

Once installation is complete we edit the kdc.conf file, which resides in the /etc/krb5kdc/ directory, to set the proper configuration. The settings of interest are the location of the KDC data files, the period for which tickets remain valid and the realm name. The other configuration options require a deeper understanding and should only be changed when you know their effects. Change the realm name to EDUONIX.COM.

Run this command to open the kdc.conf file

sudo gedit /etc/krb5kdc/kdc.conf

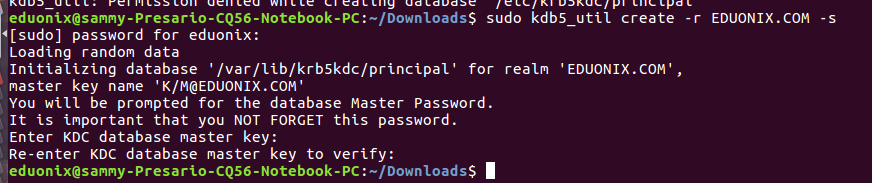

We begin by creating a KDC database for our installation. The kdb5_util command creates the database and a stash file that stores the master key of the database. The master key is used to encrypt the database to improve security. Run the command below to create the database and use a master key of your choice. In this tutorial we use @Eduonix as our master key.

kdb5_util create -r EDUONIX.COM -s

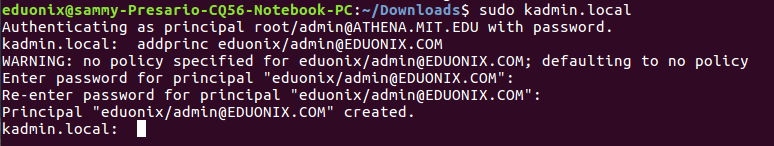

After the database has been created we edit the acl file to include the Kerberos principals of administrators. This file identifies principals with administrative rights on the Kerberos database. Its location is set in the kdc.conf file. Open the acl file by running

sudo gedit /etc/krb5kdc/kadm5.acl

and add this line

*/admin@EDUONIX.COM *

This grants all privileges to principals that have the admin instance. The kadmin.local utility is used to add administrators to the Kerberos database. Run the command below and, when prompted, enter addprinc eduonix/admin@EDUONIX.COM. This adds the eduonix user as an administrator of the Kerberos database.

sudo kadmin.local

Start the Kerberos services by running the commands below. On Debian and Ubuntu the services are named after the packages installed earlier.

sudo service krb5-kdc start

sudo service krb5-admin-server start

If you would like to reconfigure Kerberos afresh to change the realm name and other settings, use this command

sudo dpkg-reconfigure krb5-kdc

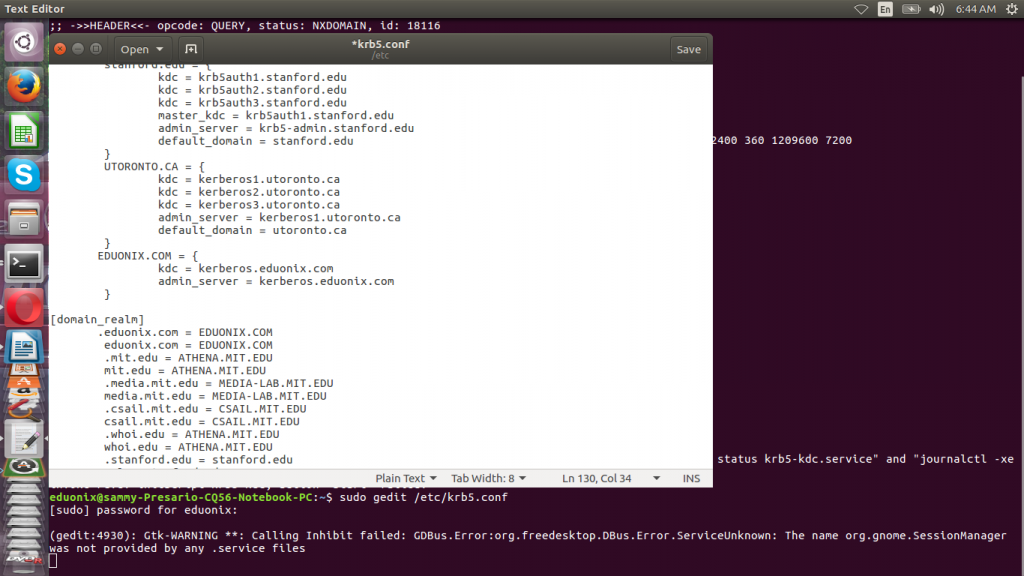

The Kerberos client configuration settings are stored in the file /etc/krb5.conf. We edit this file to point the client to the correct KDC. Change the default realm to EDUONIX.COM. Add the realm by including the lines below in the realms section

sudo gedit /etc/krb5.conf

EDUONIX.COM = {

kdc = kerberos.eduonix.com

admin_server = kerberos.eduonix.com

}

Add the lines below under domain_realm

.eduonix.com = EDUONIX.COM

eduonix.com = EDUONIX.COM

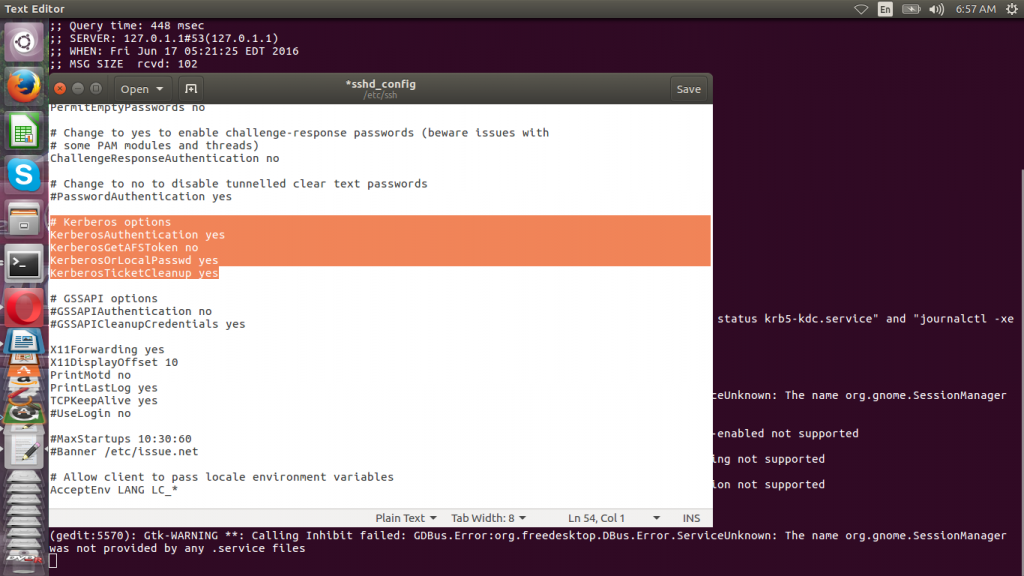
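To see how the realm and domain_realm entries fit together, the sketch below writes a sample file to a scratch location rather than to /etc/krb5.conf. The scratch path and the minimal [libdefaults] section are assumptions for illustration; the realm and host names are the ones used in this tutorial, and a real krb5.conf usually carries more settings.

```shell
# Write a sample client configuration to a scratch file so the
# system-wide /etc/krb5.conf is left untouched.
# SAMPLE_CONF is a hypothetical path created only for this sketch.
SAMPLE_CONF="$(mktemp)"

cat > "$SAMPLE_CONF" <<'EOF'
[libdefaults]
    default_realm = EDUONIX.COM

[realms]
    EDUONIX.COM = {
        kdc = kerberos.eduonix.com
        admin_server = kerberos.eduonix.com
    }

[domain_realm]
    .eduonix.com = EDUONIX.COM
    eduonix.com = EDUONIX.COM
EOF

# Show the lines that name the default realm and the KDC host.
grep -E 'default_realm|kdc =' "$SAMPLE_CONF"
```

The [domain_realm] entries map host names to the realm: the entry with a leading dot covers every host under eduonix.com, and the entry without it covers the bare domain.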

With Kerberos authentication set up, we need to edit the SSH configuration to allow Kerberos authentication to be used.

sudo gedit /etc/ssh/sshd_config

# Kerberos options

KerberosAuthentication yes

KerberosGetAFSToken no

KerberosOrLocalPasswd yes

KerberosTicketCleanup yes

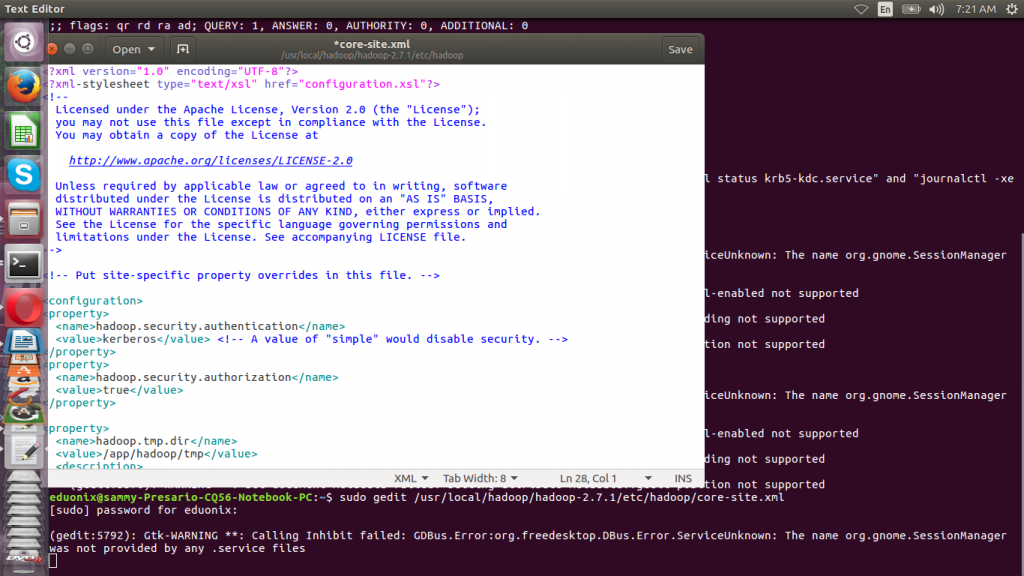

The last thing to do is edit the Hadoop core-site.xml file to enable Kerberos authentication. This has to be done on all the nodes in the cluster.

sudo gedit /usr/local/hadoop/hadoop-2.7.1/etc/hadoop/core-site.xml

<property>
  <name>hadoop.security.authentication</name>
  <value>kerberos</value>
  <!-- A value of "simple" would disable security. -->
</property>
<property>
  <name>hadoop.security.authorization</name>
  <value>true</value>
</property>
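Because the same change has to be made on every node, a quick check of the two properties can be handy. The sketch below is a rough illustration only: get_property is a hypothetical helper built on tr, grep and sed, it assumes the value element follows the name element directly, and it reads a sample file written to a scratch path instead of the real core-site.xml.

```shell
# Hypothetical helper: print the <value> that follows a given <name>.
# $1 = property name, $2 = path to a Hadoop-style XML file.
get_property() {
  tr -d '\n' < "$2" |
    grep -o "<name>$1</name>[^<]*<value>[^<]*</value>" |
    sed 's/.*<value>\(.*\)<\/value>/\1/'
}

# Sample file standing in for core-site.xml (scratch path, not the real one).
SAMPLE_SITE="$(mktemp)"
cat > "$SAMPLE_SITE" <<'EOF'
<configuration>
  <property>
    <name>hadoop.security.authentication</name>
    <value>kerberos</value>
  </property>
  <property>
    <name>hadoop.security.authorization</name>
    <value>true</value>
  </property>
</configuration>
EOF

get_property hadoop.security.authentication "$SAMPLE_SITE"
get_property hadoop.security.authorization "$SAMPLE_SITE"
```

On a node where Hadoop is installed, running hdfs getconf -confKey hadoop.security.authentication is likely a more reliable way to read the effective value, since it goes through Hadoop's own configuration loading.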

At the beginning of the tutorial we created a directory without any problems. After Kerberos has been implemented, any attempt to create a directory without authentication will be refused. In part two of this tutorial we will demonstrate how to request tickets and authenticate.

hadoop fs -mkdir /usr/local/kerberos2

This tutorial introduced Kerberos as a way of adding security to your Hadoop cluster. It discussed basic Kerberos concepts, the installation and configuration of the client and server components, and the configuration of SSH and Hadoop to use Kerberos.

As per my understanding, we can't configure Kerberos on a single-node cluster.

Nice one Radhika

Following the exact steps mentioned above, when I run the service start commands it says unit not found. Am I missing a step?

Exactly the same problem. Did you get the solution?