Introduction

Hadoop is well known for its data processing capabilities, such as searching and sorting, and it can also be used for batch analysis. To use more of this tool's features, we sometimes need to customize how jobs are set up. This article is aimed at those who need to pass multiple files as input to a single job, for example when combining data from several sources to support better predictive analysis. Being able to supply several inputs in this way helps meet today's business needs and demands.

Need for passing multiple arguments for a map reduce job

Many developers and data scientists need to examine critically how this framework can be used to process data more efficiently. Hadoop is known for analysing huge files, but to do so those large and complex data sets must first be divided and stored in the Hadoop Distributed File System (HDFS). In some cases, we might need to use Hadoop to analyse data from multiple domains (for example, healthcare data within the insurance sector). Insurance companies need to analyse healthcare data in order to set up insurance plans for different patients, and for this the job must be given the correct input parameters. One domain often depends on another, much as e-commerce sites use sentiment analysis to make decisions for the users buying products on their websites.

Why passing single argument is not sufficient

Let's look at a scenario in which passing a single argument is not sufficient for data analysis. Data analysts often run into problems when changing an existing log file to make it efficient enough to produce better results.

X Company uses data solutions to check whether its organization is functioning properly. It needs to analyse its records on a regular basis in order to flag the anomalies that are causing problems and to provide solutions. Y Company provides a firewall-based solution, and for that it needs to analyse network traffic, monitor packets, and keep records of the packets moving in and out of its firewall. Big data analysis can handle these complex data structures and offers an opportunity for multiple domains to collaborate. If X and Y share their data for analysis (with limited access permissions and under agreed constraints), they can collaborate easily, and a single job then needs to read input from both.

How efficient is passing multiple arguments as compared to single argument passing

There are also cases where we do not need to pass multiple arguments in a single command, so we should consider where this method is suitable and exactly where to use it.

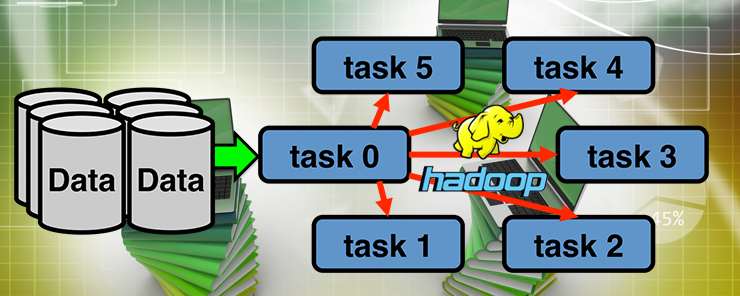

Passing multiple files to a map reduce job

Let's take an example where a user has to analyse 4 files, namely a/part000.gz, b/part000.gz and so on (there could be 4 files or more than 100). The task is to feed all of these files into a single MapReduce job. One approach is to store the files in a single directory and implement a Java input format known as MultipleFileInputFormat. This is not a feasible solution when the data comes from multiple domains and the files cannot be brought together into a single namespace. Some users prefer to merge these small files into a single namespace anyway, and in that situation Hadoop does not always give good results.

Solution 1: To solve this, we can use the FileInputFormat.addInputPaths() method, which takes the job and a comma-separated list of input paths. We can write it as

FileInputFormat.addInputPaths(job, "user1/file0.gz,user2.file.gz…………");

This is the basic and frequently used approach for managing multiple inputs for a single map reduce job.
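
When the list of inputs is built at run time (for example the a/part000.gz, b/part000.gz files mentioned earlier), the comma-separated string can be assembled before it is handed to addInputPaths. The following is a minimal sketch using only the Java standard library. The class name InputPathList and its join method are hypothetical helpers, not part of Hadoop, and the Hadoop call itself appears only in a comment.

```java
import java.util.List;

// Hypothetical helper (not part of Hadoop): builds the comma-separated
// string that FileInputFormat.addInputPaths(job, ...) takes.
final class InputPathList {

    private InputPathList() {}

    static String join(List<String> paths) {
        if (paths.isEmpty()) {
            throw new IllegalArgumentException("at least one input path is required");
        }
        for (String path : paths) {
            // Commas separate paths in the final string, so this sketch
            // rejects any single path that contains one (a simplification).
            if (path.trim().isEmpty() || path.contains(",")) {
                throw new IllegalArgumentException("invalid input path: '" + path + "'");
            }
        }
        return String.join(",", paths);
    }
}
```

In a driver, the result could then be passed as FileInputFormat.addInputPaths(job, InputPathList.join(paths)), where job is the existing Job object.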

Solution 2: Implementing Java code for multiple inputs

In many cases, editing the Java code is the most direct solution. Here too, we want to pass multiple files (from multiple domains) to a single MapReduce job. To do this, we can add a few lines to the job's Java code so that it accepts multiple inputs.

Suppose two files need to be analysed to produce a list of the people who use the services of Hortonworks and Cloudera, and a single output file is needed from both.

We have two files, cloudera.txt and hortonworks.txt.

The main method of the MapReduce program must be extended with the following code:

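    // Input and output locations are taken from the command-line arguments.
    Path hdpPath = new Path(args[0]);
    Path clouderaPath = new Path(args[1]);
    Path outputPath = new Path(args[2]);

    // Each input path is registered with its own input format and mapper class.
    MultipleInputs.addInputPath(job, clouderaPath, TextInputFormat.class, JoinclouderaMapper.class);
    MultipleInputs.addInputPath(job, hdpPath, TextInputFormat.class, HdpMapper.class);

    // Both mappers feed the same job, which writes to a single output location.
    FileOutputFormat.setOutputPath(job, outputPath);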

Adding these lines allows multiple files to be passed to a single MapReduce job, each handled by its own mapper class.
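
To make the effect of MultipleInputs more concrete, the sketch below simulates it in plain Java, without Hadoop: each input gets its own "mapper" that tags a record with its source, and a single "reducer" step groups the tags by key to give one combined result. The record format (one customer name per line), the tags, and the class name TaggedMergeSketch are assumptions made for illustration. The JoinclouderaMapper and HdpMapper classes are not shown in this article and may work differently.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java simulation (no Hadoop) of two mappers feeding one reducer.
final class TaggedMergeSketch {

    private TaggedMergeSketch() {}

    // "Mapper": emits (name, tag) for every non-empty line of one input.
    static void map(List<String> lines, String tag, Map<String, List<String>> shuffled) {
        for (String line : lines) {
            String name = line.trim();
            if (!name.isEmpty()) {
                shuffled.computeIfAbsent(name, k -> new ArrayList<>()).add(tag);
            }
        }
    }

    // "Reducer": one output line per name, listing the sources it came from.
    static List<String> merge(List<String> hdpLines, List<String> clouderaLines) {
        // A TreeMap keeps keys sorted, standing in for the shuffle-and-sort phase.
        Map<String, List<String>> shuffled = new TreeMap<>();
        map(hdpLines, "hortonworks", shuffled);
        map(clouderaLines, "cloudera", shuffled);

        List<String> output = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : shuffled.entrySet()) {
            output.add(entry.getKey() + "\t" + String.join(",", entry.getValue()));
        }
        return output;
    }
}
```

Tagging each record with its source is one common way to tell the inputs apart once they reach the same reducer; whether it suits a given job depends on what the combined output needs to contain.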

We can now run the job with the following command, supplying the locations of the two input files followed by the output directory as arguments.

hadoop jar analysis.jar org.myorg.Capital /user/cloudera/capital/input/Hdp.txt /user/cloudera/capital/input/cloudera.txt /user/cloudera/capital/output
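
The command above passes two input files and then the output directory. If the number of inputs varies, one possible convention (an assumption here, not something the driver in this article does) is to treat every argument except the last as an input and the last as the output directory. A minimal sketch, using the hypothetical class name JobArguments:

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: splits command-line arguments into inputs and output.
final class JobArguments {
    final List<String> inputs;
    final String output;

    private JobArguments(List<String> inputs, String output) {
        this.inputs = inputs;
        this.output = output;
    }

    static JobArguments parse(String[] args) {
        if (args.length < 2) {
            throw new IllegalArgumentException("usage: <input> [<input> ...] <output>");
        }
        // Everything before the last argument is treated as an input path.
        List<String> inputs = Arrays.asList(Arrays.copyOfRange(args, 0, args.length - 1));
        // The last argument is the output directory.
        return new JobArguments(inputs, args[args.length - 1]);
    }
}
```

In a driver, each entry in inputs could then be registered with MultipleInputs.addInputPath, or the whole list could be joined with commas for FileInputFormat.addInputPaths.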

Conclusion

In this article, we have discussed one of the many powerful features of Apache Hadoop: passing more than one input to a single MapReduce job. We have presented two ways of managing multiple inputs when running a job, and we have looked at the pros and cons of this approach and why it is needed. Future enhancements to Hadoop may make it even easier for multiple domains to collaborate and analyse data together.