Image recognition is a machine learning technique designed to resemble the way the human brain functions. With it, computers learn to recognize the visual elements within an image. By relying on large databases and noticing emerging patterns, they can make sense of images and formulate relevant tags and categories.

While it is easy for human and animal brains to recognize objects, computers have difficulty with the same task. When we look at something such as a tree or a car, we usually don’t have to study it consciously before we can tell what it is. For a computer, on the other hand, identifying anything (be it a clock, a chair, a person or an animal) is a very difficult problem, and the stakes in solving it are very high.

What is Image Recognition? What are its uses?

In the context of machine vision, image recognition is the ability of software to identify people, places, objects, actions and writing in images. To achieve this, computers typically combine machine vision technologies, a camera and artificial intelligence software.

Structure of a Convolutional Neural Network:

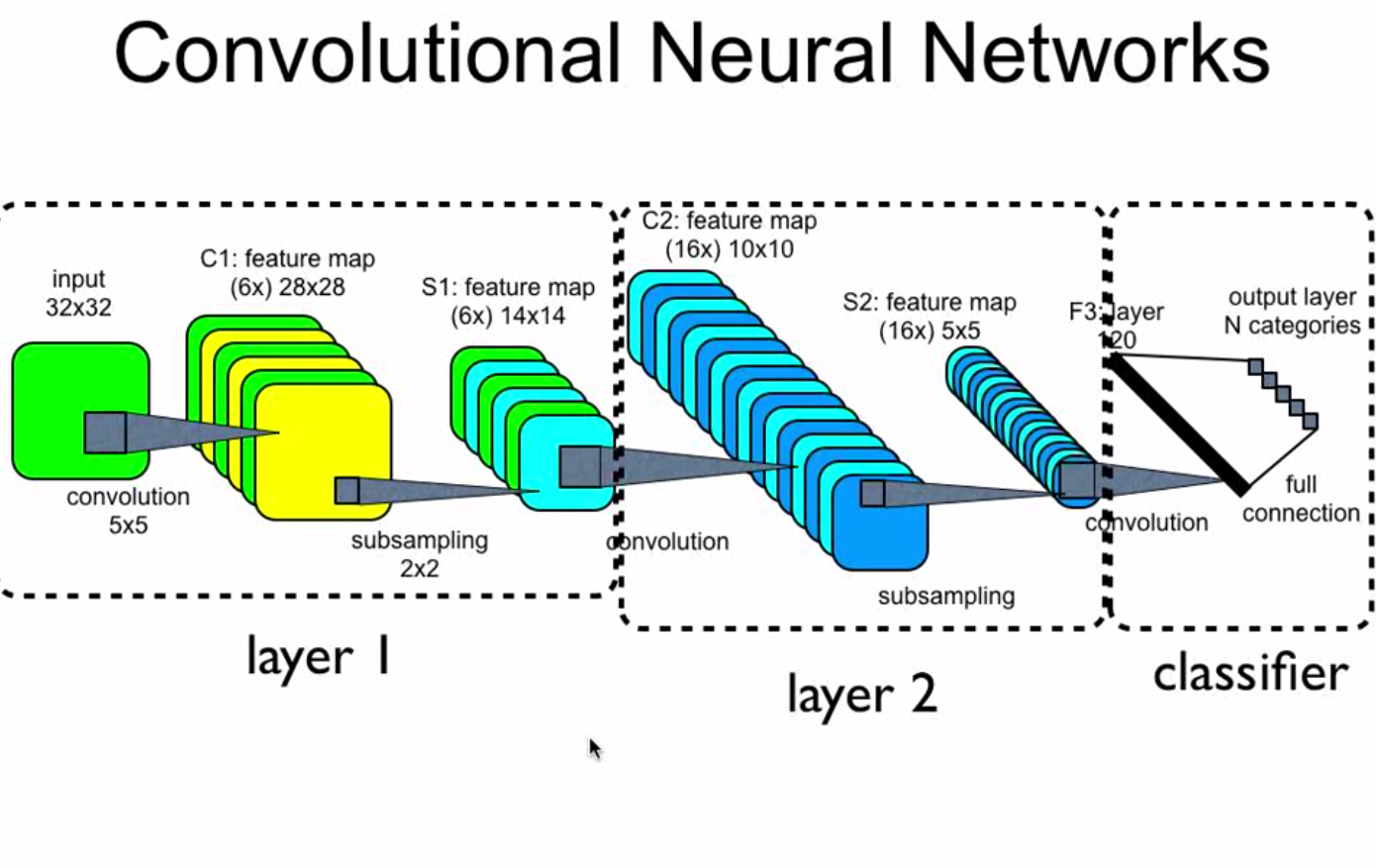

Because of the way a neural network is structured, a relatively straightforward change can make even huge images more manageable. The result is what we call Convolutional Neural Networks, also known as CNNs or ConvNets.

The general applicability of neural networks is one of their advantages, but it becomes a liability when dealing with images. Convolutional neural networks make a conscious tradeoff: a network designed specifically for handling images sacrifices some generality in exchange for a much more feasible solution.

In an image, proximity is strongly related to similarity, and convolutional neural networks specifically take advantage of this fact. Two pixels that are near each other in a given image are more likely to be related than two pixels that are far apart. In a regular neural network, however, every pixel is connected to every single neuron. The added computational load makes the network less accurate in this case.

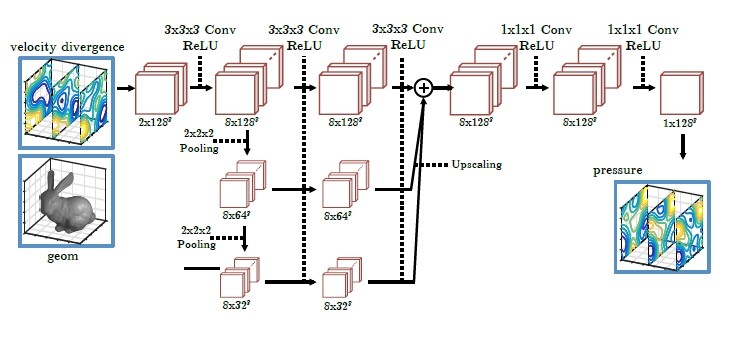

Convolution tries to solve this problem by removing many of the less significant connections. Technically, convolutional neural networks make image processing computationally manageable by filtering connections by proximity. In a given layer, rather than linking every input to every neuron, a convolutional neural network intentionally restricts the connections so that each neuron accepts inputs only from a small subsection of the layer before it (such as 5*5 or 3*3 pixels). Each neuron is therefore responsible for processing only a certain portion of the image. This closely resembles how individual cortical neurons function in the brain, where each neuron responds to only a small portion of the complete visual field.
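To make the savings concrete, the short sketch below counts how many input connections a single neuron needs in each case. The 224*224 RGB image size and the function names are assumptions chosen purely for illustration; they do not come from any particular network.

```python
def dense_connections(height, width, channels):
    """Inputs feeding ONE neuron when every pixel value is linked to it."""
    return height * width * channels


def local_connections(patch, channels):
    """Inputs feeding ONE neuron that only sees a patch x patch window."""
    return patch * patch * channels


# Hypothetical input: a 224*224 image with 3 color channels.
fully_connected = dense_connections(224, 224, 3)
local_3x3 = local_connections(3, 3)
local_5x5 = local_connections(5, 3)

print("fully connected neuron:", fully_connected, "inputs")
print("3*3 local neuron:", local_3x3, "inputs")
print("5*5 local neuron:", local_5x5, "inputs")
```

Under these assumptions, restricting a neuron to a small window reduces its inputs from hundreds of thousands to a few dozen, which is the reduction in connections described above.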

How to use Convolutional Networks for image processing:

1. The actual input image is scanned for features. The filter (shown as the light rectangle) passes over it.

2. The activation maps are stacked on top of one another, one for each filter used. The larger rectangle marks one patch to be down-sampled.

3. The activation maps are condensed via down-sampling.

4. A new group of activation maps is created by passing filters over the first down-sampled stack.

5. A second down-sampling follows, which condenses the second group of activation maps.

6. A fully connected layer classifies the output, with one label per node.
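The six steps above can be followed by tracing how the size of the data changes from stage to stage. The sketch below is only an illustration: the 32*32 input, the 5*5 filters, the filter counts (6 and 16), the 2*2 down-sampling window and the 10 labels are all assumed values, and the helper names `conv_size` and `pool_size` are hypothetical.

```python
def conv_size(size, kernel, stride=1, padding=0):
    """Side length of an activation map after a square filter scans the input."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_size(size, window):
    """Side length after non-overlapping window x window down-sampling."""
    return size // window


# Hypothetical settings, chosen only for illustration.
side, channels = 32, 3  # a 32*32 RGB input image
shapes = [("input", side, channels)]

for name, kernel, filters in [("conv1", 5, 6), ("conv2", 5, 16)]:
    side = conv_size(side, kernel)  # steps 1-2 and 4: one activation map per filter
    shapes.append((name, side, filters))
    side = pool_size(side, 2)       # steps 3 and 5: down-sampling
    shapes.append((name.replace("conv", "pool"), side, filters))
    channels = filters

flattened = side * side * channels  # values handed to the fully connected layer (step 6)
num_labels = 10                     # assumed: one output node per label

for name, s, depth in shapes:
    print(f"{name}: {s}x{s}x{depth}")
print(f"fully connected: {flattened} inputs -> {num_labels} label nodes")
```

Each convolution adds depth (one map per filter) while each down-sampling step shrinks the width and height, so the fully connected layer at the end receives far fewer values than the raw image contains.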

Filtration by Convolutional Neural Networks Using Proximity:

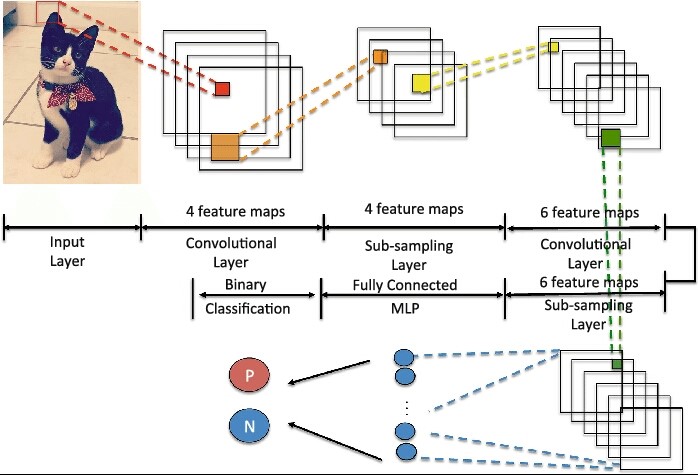

The secret behind the above lies in the addition of two new kinds of layers: convolutional and pooling layers. Let’s break the process down using the example of a network designed to do one thing: determine whether or not a picture contains a ‘friend’.

1. The first step in the process is the convolution layer, which itself contains several steps.

2. First, break the picture of the friend down into a series of overlapping 3*3 pixel tiles.

3. Next, run each of these tiles through the same single-layer neural network, keeping the weights unchanged from tile to tile. This turns the collection of tiles into an array. Because each tile is small (3*3 in this case), the neural network required to process it stays small and manageable.

4. Then, the output values are arranged in an array that numerically represents the content of each area of the photograph, with the axes representing height, width and color channels. So, for each tile, one would have a 3*3*3 representation in this case.

5. The next step is the pooling layer. It takes these 4-dimensional arrays and applies a down-sampling function along the spatial dimensions. The result is a pooled array that keeps only the important portions of the image and discards the rest, which reduces the computation required and helps to avoid overfitting.

6. The down-sampled array is then used as the input to a regular fully connected neural network. Since pooling and convolution have reduced the size of the input dramatically, we now have something that a normal network can handle easily while still preserving the most significant portions of the data. The final output represents how confident the system is that the picture contains a friend.
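The steps above can be sketched in a few lines of plain Python. This is a minimal illustration under several stated assumptions, not a working friend detector: the image is a tiny 6*6 grayscale grid instead of a color photograph, the weights are hand-picked rather than learned, ReLU is an assumed choice of activation, max pooling is an assumed choice of down-sampling function, and every function name is hypothetical.

```python
import math


def extract_tiles(image, size=3):
    """Slide a size x size window over a 2-D image, one pixel at a time."""
    rows = len(image) - size + 1
    cols = len(image[0]) - size + 1
    return [[[image[r + i][c + j] for i in range(size) for j in range(size)]
             for c in range(cols)]
            for r in range(rows)]


def convolve(image, weights, size=3):
    """Apply the SAME weights to every tile, then ReLU (an assumed activation)."""
    tiles = extract_tiles(image, size)
    return [[max(0.0, sum(w * p for w, p in zip(weights, tile))) for tile in row]
            for row in tiles]


def max_pool(feature_map, window=2):
    """Keep only the largest value in each non-overlapping window x window block."""
    rows = len(feature_map) // window
    cols = len(feature_map[0]) // window
    return [[max(feature_map[r * window + i][c * window + j]
                 for i in range(window) for j in range(window))
             for c in range(cols)]
            for r in range(rows)]


def friend_confidence(pooled, dense_weights, bias=0.0):
    """Flatten the pooled array and squash a weighted sum into a 0..1 score."""
    flat = [v for row in pooled for v in row]
    z = sum(w * v for w, v in zip(dense_weights, flat)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical 6*6 grayscale image containing a bright vertical stripe.
image = [[0, 0, 1, 1, 0, 0] for _ in range(6)]
# Hand-picked weights that respond to a dark-to-bright vertical edge (not learned).
edge_weights = [-1, 0, 1, -1, 0, 1, -1, 0, 1]

feature_map = convolve(image, edge_weights)  # 4x4 array, one value per tile
pooled = max_pool(feature_map)               # 2x2 array after down-sampling
confidence = friend_confidence(pooled, [0.1, 0.1, 0.1, 0.1])
print(f"confidence: {confidence:.3f}")
```

In a real network the tile weights and the fully connected weights would be learned from labeled examples, and each tile would carry three color channels as described in step 4. The sketch only shows how the data flows from tiles, to a shared-weight layer, to pooling, to a final confidence value.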

Applications of Image Recognition:

Image recognition has many applications. The most common and popular of them is personal photo organization. Anyone would like to manage a huge library of photo memories by scenario and by visual topic, ranging from particular objects to wide landscapes.

Image recognition often powers the user experience of photo organization applications. In addition to providing photo storage, these apps go a step further by offering better discovery and search functions. They achieve this through automated image organization driven by machine learning. The image recognition application programming interface (API) integrated into such an application classifies images based on identified patterns and groups them systematically and thematically.

Other applications of image recognition include stock photography and video websites, interactive marketing and creative campaigns, face and image recognition on social networks, and efficient image classification for websites that store huge visual databases.

Conclusion:

In practice, the workings of a Convolutional Neural Network (CNN) are convoluted, involving a number of hidden, pooling and convolutional layers. In addition, a real-world CNN generally involves hundreds or thousands of labels rather than just a single label.

Building a CNN from scratch can be an expensive and time-consuming task. That said, a number of APIs have recently been developed that enable organizations to glean insights without in-house machine learning or computer vision expertise, which makes the task much more feasible.