A Markov model is a stochastic (that is, approach to anything that is based on probability) method for randomly changing systems which assumes that to predict the state of a system at time ‘t’, we need to know the state of the system at time ‘t-1’ only. These models show all possible states as well as the transitions, rate of transitions and probabilities between them.

In simple words (Markov Model):

We can say that Markov Model can tell us the next state, that is, state at time ‘t’ based only on the recent previous state, that is, state at time ‘t-1’. The following are some of the implementations/variations of Markov Models:

1) Markov chain – Used by systems that are autonomous and have fully observable states.

2) Hidden Markov model – Used by systems that are autonomous and where the state is partially observable.

3) Markov decision processes – Used by controlled systems with a fully observable state.

4) Partially observable Markov decision processes – Used by controlled systems where the state is partially observable.

We will not go in the details of the types of Markov models. Rather, we will try to learn a basic “Markov Model” having observable states.

Markov assumption can be written as:

P(eventt | eventt-1, eventt-2, .., event1) = P(eventt|eventt-1)

Model

For a very basic Markov Model, all the states will be observable, that is, there will be no hidden states. States represent the various cases, or say, instances, that we are going to consider. For example, if we talk about “weather”, the possible states can be ‘Rainy’ or ‘Sunny’, or if we talk about grocery stores, the various states can be the different nearest stores, etc.

In the first example, we will see in detail how actually a Markov Model predicts the next day’s weather.

The change in state from one to another is known as Transition. The transition from one state to another is represented by the corresponding probability, known as transition probability.

The transitions are basically visualised via a directed graph and the edges in the graph have the probabilities of transition from one state to other.

For every Markov Model, firstly, we draw a matrix of transition probabilities that show the probability of occurrence of a state after a particular state. And from the definition of Markov Model, we know that to predict tomorrow’s state (that is, next state) we must have today’s state (that is, current state).

Hence, for better understanding, we can write:

[Next State] = [Matrix of Transition Probabilities] * [Current State]

X1 = PX0

*X1, P, X0 all are matrices.

Weather Prediction

Consider the matrix of transition probabilities below.

|

CURRENT STATE |

NEXT STATE |

|

|

RAINY |

SUNNY |

|

|

START |

0.7 |

0.3 |

|

RAINY |

0.4 |

0.6 |

|

SUNNY |

0.3 |

0.7 |

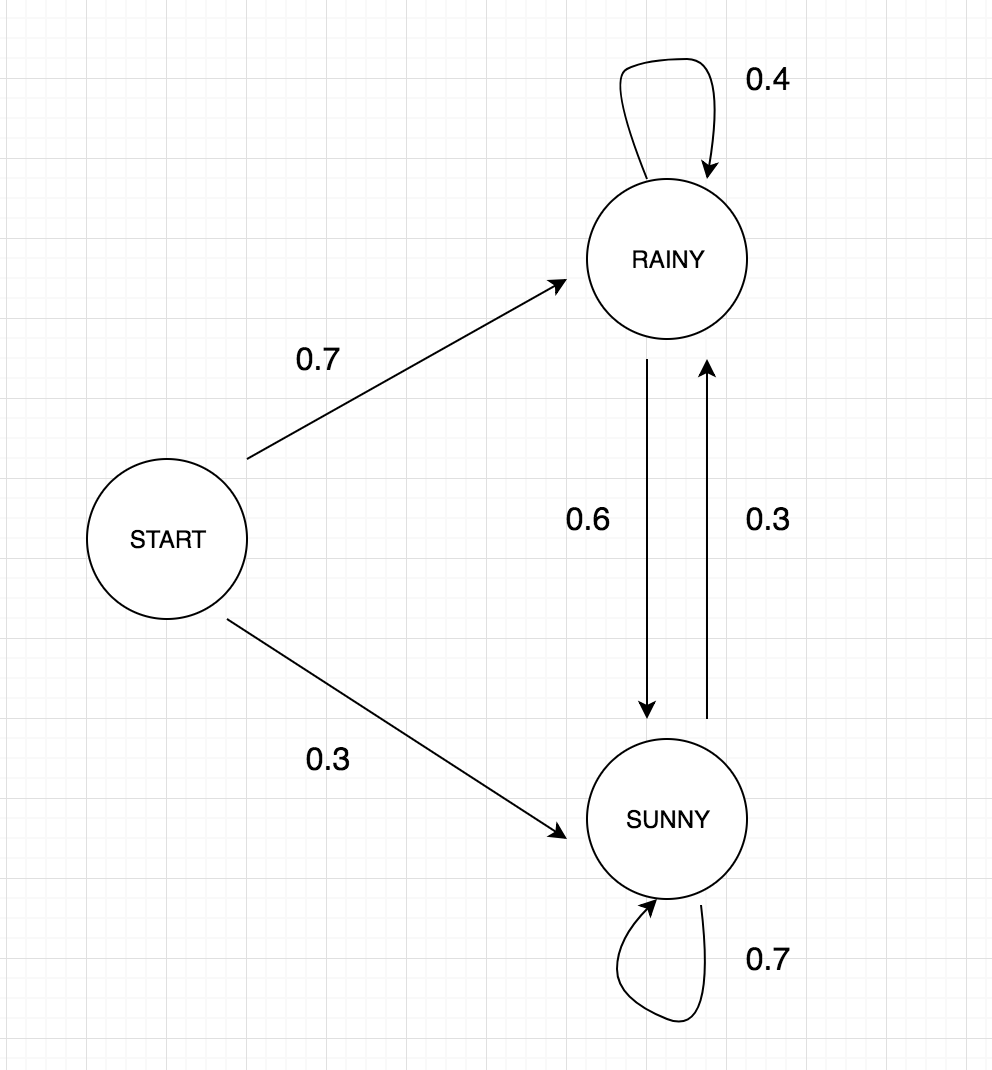

Now, let us construct a directed graph on the basis of above table which will show some of the transitions of states.

Now, let us try to understand the above graph prepared from the ‘Matrix of Transition Probabilities’:

*NOTE:

We may omit start and we can start with either ‘Rainy’ or ‘Sunny’ directly because mostly, the transition matrix is considered to be square matrix but for good understanding, I have used ‘Start’ state. However, in the code, we will omit the start state.

The start state represents the starting point and the values.

P(rainy|start) = 0.7

P(sunny|start) = 0.3

P(rainy|start) and P(sunny|start) represent the probabilities that we have generalised from the previous states (that is, states before start) or say, from the previous study of a place.

Now, if we know that today is a ‘RAINY’ day and we want to predict for tomorrow, then we can directly predict from the above graph.

P(rainy|start, rainy) = 0.4 * 0.7 = 0.28

Here, 0.7 is the probability of a day being ‘rainy’ if we have started from the ‘start’ state and 0.4 is probability of a day being ‘rainy’ if the previous day was ‘rainy’.

Example

Question

What is the probability of getting the weather sequence as “RAINY RAINY RAINY SUNNY”?

Answer

P(rainy|start) = 0.7; eq—1

eq—1 gives the probability of 1st day to be ‘RAINY’

P(rainy|start, rainy) = 0.7 * 0.4 = 0.28 eq—2

eq—2 gives the probability of 2nd day to be ‘RAINY’ given 1st day was rainy.

P(rainy|start, rainy, rainy) = 0.28 * 0.4 = 0.112 eq—3

eq—3 gives the probability of 3rd day to be ‘RAINY’ given 2nd day was rainy.

P(sunny|start, rainy, rainy, rainy) = 0.112 * 0.6 = 0.0672 eq—4

eq—4 gives the probability of 4th day to be ‘SUNNY’ given 3rd day was rainy.

So,according to Markov Model we can get the sequence as “RAINY RAINY RAINY SUNNY” with probability 0.0672.

Code

Now, let’s move on to the code.

We will take the same problem as above, that is, weather prediction which do not have any start state.

Firstly we have to define:

i) Our States.

ii) Transitions.

iii) Transition probabilities.

iv) Weather of starting day.

iv) Number of days to predict.

*All the initialisations are mentioned and all the steps are well explained with the help of comments in the code.

# import libraries

import numpy as np

import random as rm

# Possible states in transition matrix.

states = ["RAINY", "SUNNY"]

# Transitions (R->RAINY, S->SUNNY)

transitions = [["RR","RS"],["SR","SS"]]

# Probabilities matrix (transition matrix)

transitionMatrix = [[0.4, 0.6],[0.3, 0.7]]

# check if probability sum up to 1 or not.

if sum(transitionMatrix[0])+sum(transitionMatrix[1])!= 2:

print("The Probabilities of transition matrix are not correct, every row should sum to 1")

# Actual function that implements Markov Model for weather forecast

def weather_forecast(days):

# Fix the weather for starting day

weatherToday = "RAINY"

print("First day: " + weatherToday)

# Will store the weather for next 4 days. So, this will have only starting day weather for now.

forecastList = [weatherToday]

i = 0

# To calculate the probability of the forecastList

prob = 1

while i != days:

if weatherToday == "RAINY":

random = np.random.choice(transitions[0],replace=True,p=transitionMatrix[0])

if random == "RR":

prob = prob * 0.4

forecastList.append("RAINY")

pass

elif random == "RS":

prob = prob * 0.6

weatherToday = "SUNNY"

forecastList.append("SUNNY")

elif weatherToday == "SUNNY":

random = np.random.choice(transitions[1],replace=True,p=transitionMatrix[1])

if random == "SR":

prob = prob * 0.3

weatherToday = "SUNNY"

forecastList.append("RAINY")

pass

elif random == "SS":

prob = prob * 0.7

forecastList.append("SUNNY")

i += 1

print("Weather forecast: " + str(forecastList))

print("Probability of getting the above weather forecast starting with : "+forecastList[0] + str(prob))

# Call the method that forecasts the weather for next 4 days

weather_forecast(4)