Do you remember the definition of a shell script?!

I told you in the first article of this series that a shell script is a collection of Linux commands, stored in an ordinary text file, and executed in sequence. So, shell scripts merely consist of commands. Sometimes a single command, or a combination of several commands (where the output of one command is piped as the input to the next), can achieve important results. Such combinations are usually short enough to fit on one line, yet as useful as long scripts (light in weight and precious in value, as the Arabic saying goes).

Some references call them one-liners. So, say hello to today's guest: One-Liners.

Sorting Directories by Disk Usage

You may find yourself in a situation where one of the file systems on your server is running out of free space. In this case, you need to identify the root cause of the problem: which directories have the highest disk usage. The following line has the solution:

du -m FILESYSTEM | sort -n

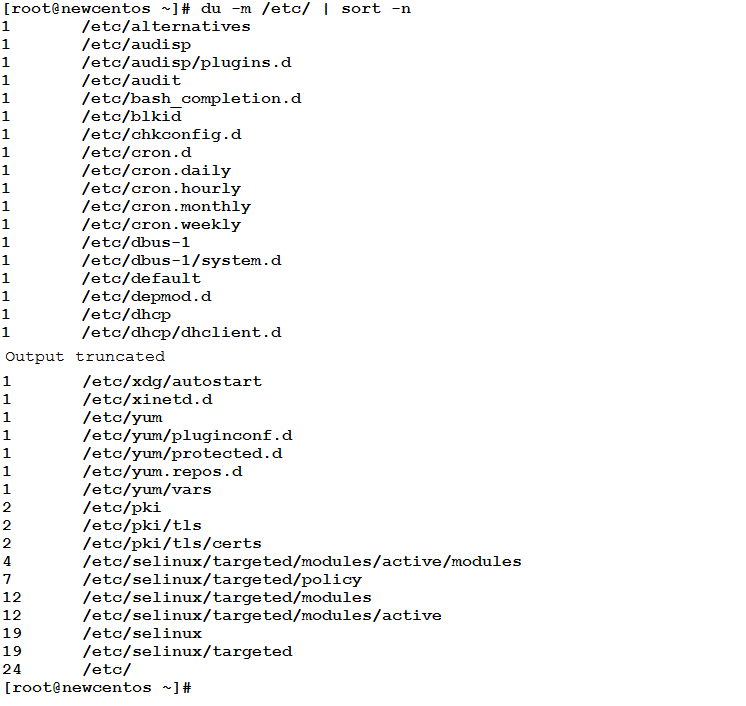

Here, FILESYSTEM is the mount point of the file system that is running out of space. To see how this composite command behaves, let's see what we get when we run it on the /etc directory:

As you can see, the total disk usage of each sub-directory under /etc is displayed, sorted numerically in ascending order. The du command's output is not sorted, which is why it is piped as input to the sort command, which performs the sorting (-n: numeric sort).
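On a large file system the sorted list can run to thousands of lines, and only the bottom of it matters. The sketch below wraps the same one-liner in a shell function and keeps just the biggest entries. The function name biggest_dirs, the default of ten lines, and the 2>/dev/null redirection are illustrative additions, not part of the original one-liner.

```shell
# biggest_dirs DIR [N]   (hypothetical helper)
# Print the N (default 10) directories under DIR with the highest
# disk usage, in MiB, smallest first and largest last.
biggest_dirs() {
    # du -m       : per-directory usage in MiB (GNU du rounds up)
    # 2>/dev/null : hide "Permission denied" messages when not run as root
    # sort -n     : numeric ascending order, so the biggest lines end up last
    # tail -n     : keep only the last N lines
    du -m "$1" 2>/dev/null | sort -n | tail -n "${2:-10}"
}
```

For example, biggest_dirs /var 5 shows the five heaviest entries. The last line is usually the starting directory itself, because du also reports the grand total for the directory it was given.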

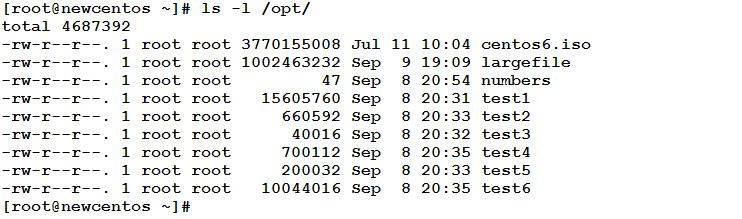

Sorting Files in a Directory by Size

The /var file system is full; you have used the previous one-liner (du -m /var | sort -n) and found that /var/spool/mail is using a lot of space. This directory contains the mail spool files of the individual user accounts on the system. So, how would you identify the spool file with the largest size?

– Very easy!! Just look at the long listing of the directory contents, and you will easily identify the big files!!

Okay, that is a solution. But what if your system has 200 user accounts (and, accordingly, 200 mail spool files)?! What if it has 5000 user accounts?! Would it be an easy task to look through 5000 files and pick out the biggest ones?! Could it be done by eye?! Of course not!!

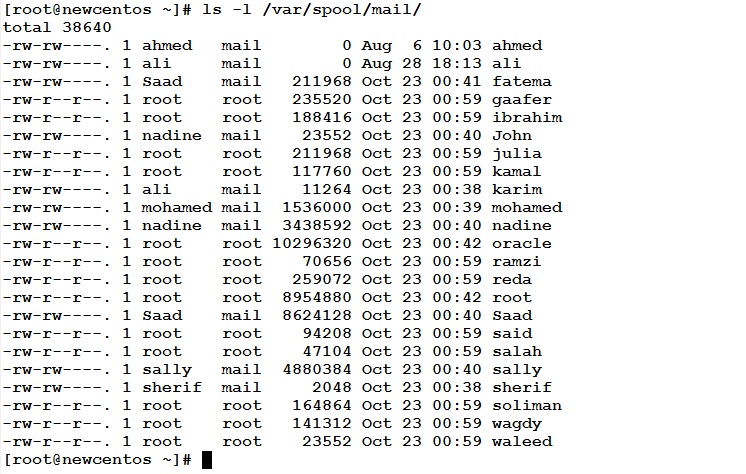

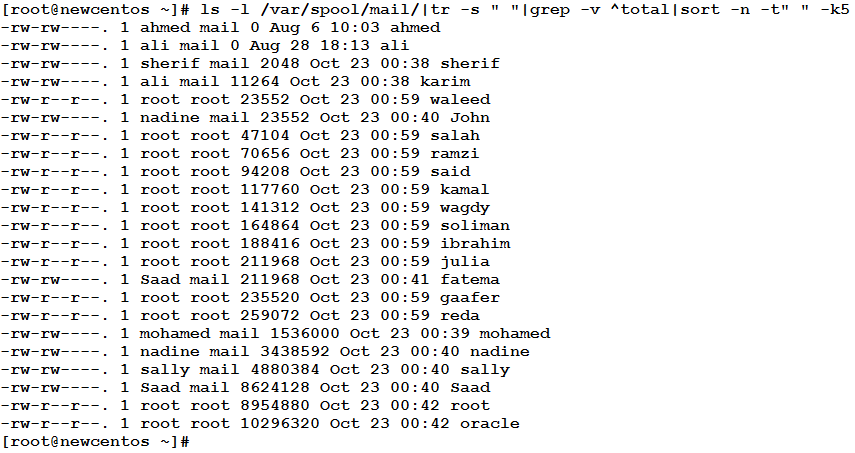

The solution is to sort the files by size. To do so, the same sort command is used to sort the long listing of the directory contents. Suppose the contents of the /var/spool/mail directory are as follows. How could we sort this output by file size?

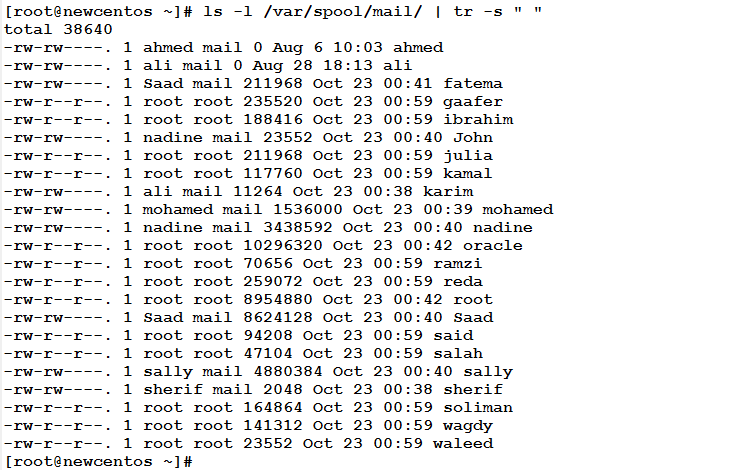

In the long listing of any UNIX/Linux directory, the file size is listed in the fifth field. So, we need to sort the above output by the fifth field. Before we do this, we first need to adjust the field delimiters to be a single space instead of multiple white spaces. The tr command takes care of this intermediate operation:

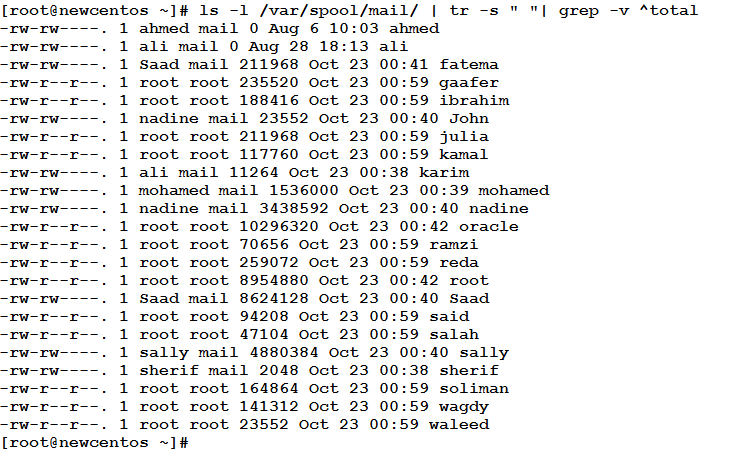
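Why is squeezing the spaces necessary? Both cut -d ' ' and sort -t ' ' treat every single space as a field separator, so a run of padding spaces produces empty fields and shifts the column numbers. The snippet below uses a made-up listing line (the user name and size are sample values, not real data) to show the fifth field before and after tr -s ' ':

```shell
# A sample long-listing line: note the two spaces before the size,
# which ls inserts to right-align the size column.
line='-rw-rw---- 1 oracle mail  1048576 Oct 20 10:15 oracle'

# Without squeezing, field 5 is the empty string between the two spaces.
printf '%s\n' "$line" | cut -d ' ' -f5

# tr -s ' ' collapses each run of spaces into one, so field 5 is the size.
printf '%s\n' "$line" | tr -s ' ' | cut -d ' ' -f5
```

The first command prints an empty line; the second prints 1048576.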

Next, we need to discard the line starting with total:

Now, we are ready for the sorting operation:

Now we can easily see that oracle, root, and Saad are the user accounts with the largest mail spool files, so we can go ahead and empty these files to free up some space. Congratulations!!
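Put together, the three steps (squeeze the spaces, drop the total line, sort on the fifth field) form a single pipeline. Below is a minimal sketch, assuming a Linux system with GNU coreutils; the function name spool_by_size is hypothetical, and LC_ALL=C is an added safeguard so the summary line is printed as the English word "total".

```shell
# spool_by_size DIR   (hypothetical helper)
# List the entries of DIR sorted by size, smallest first,
# so the biggest files appear at the bottom.
spool_by_size() {
    # LC_ALL=C ls -l        : long listing with an English "total" line;
    #                         the size is the fifth field
    # tr -s ' '             : squeeze runs of spaces into a single space
    # grep -v '^total'      : drop the "total" summary line
    # sort -t ' ' -k5,5n    : sort numerically on the fifth field only
    LC_ALL=C ls -l "$1" | tr -s ' ' | grep -v '^total' | sort -t ' ' -k5,5n
}
```

For example, spool_by_size /var/spool/mail | tail -n 3 shows the three biggest spool files. GNU and BSD ls can also sort by size on their own with ls -lSr (-S sorts by size, largest first, and -r reverses the order), but the pipeline shows how general-purpose tools combine to do the same job.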

Deleting Files Older than x Days

A new employee on your team told you that /tmp on one of the servers is full, and asked what he should do. /tmp (for those who don't know) is a file system used to store temporary files.

– OK, so what?! Temp files!! I can go ahead and execute rm -rf /tmp/*

Wait!! That won't be a problem for temp files that were written and are no longer accessed. But what about temporary files currently in use by running processes?!

The solution is to locate and delete files under /tmp that are older than, say, 15 days. If this turns out to be insufficient, go ahead and delete temp files older than 7 days. The one-liner that achieves this task is as follows:

find /tmp -type f -mtime +15 -exec rm -f {} \;

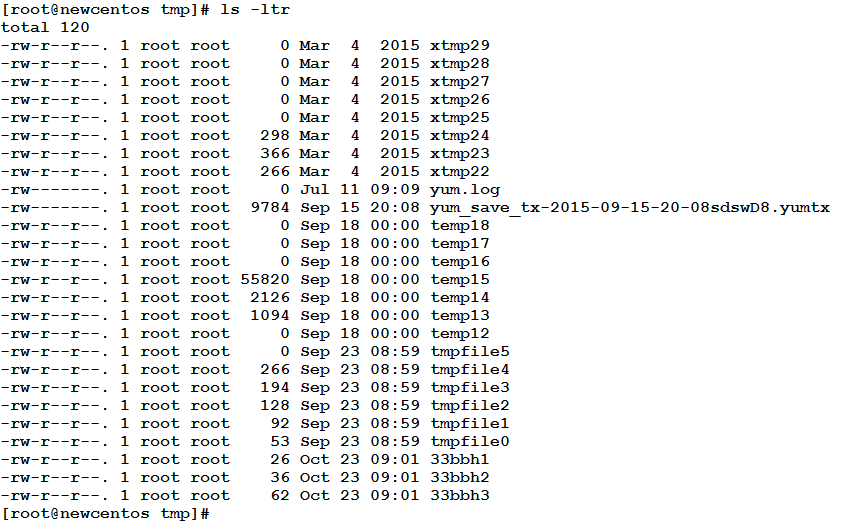

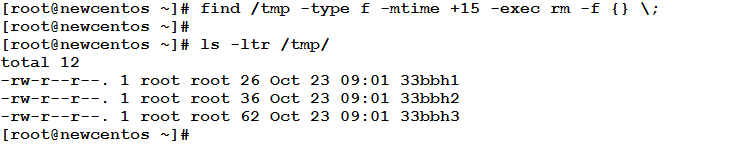

Here, the number following the + sign is the age threshold in days: files last modified more than that many days ago are deleted. Assume today is 23 October 2015, and the long listing of /tmp looks like the following:

We have files from March, from July, and from about 35 days ago. Why keep all of them?! If we execute the above command, we should get the following:

Mission accomplished!!!
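Because rm cannot be undone, it is worth previewing what find will match before deleting anything. The sketch below splits the cleanup into a dry run and the actual deletion, both based on find's -mtime test; the function names are hypothetical. Note that GNU find ignores fractional days when evaluating -mtime, so +15 matches files last modified at least 16 full days ago.

```shell
# list_old_files DIR DAYS   (hypothetical helper)
# Dry run: print regular files under DIR whose last modification
# is more than DAYS whole days ago.
list_old_files() {
    find "$1" -type f -mtime +"$2" -print
}

# delete_old_files DIR DAYS   (hypothetical helper)
# Same match, but remove the files; "-exec ... {} +" passes
# many file names to a single rm invocation.
delete_old_files() {
    find "$1" -type f -mtime +"$2" -exec rm -f {} +
}
```

A cautious session would be list_old_files /tmp 15, a quick review of the list, and only then delete_old_files /tmp 15.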

Locating Files from a Specific Day

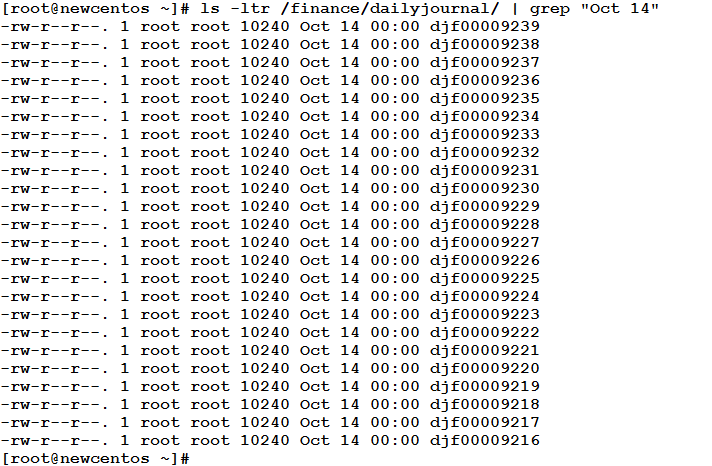

Now, after a serious problem, the audit department in your firm asked you to send them the daily journal files for the day just before the problem (say the problem was on 15 October).

– OK, I will go ahead and list the files, and from the long listing I can easily identify the files created on that day.

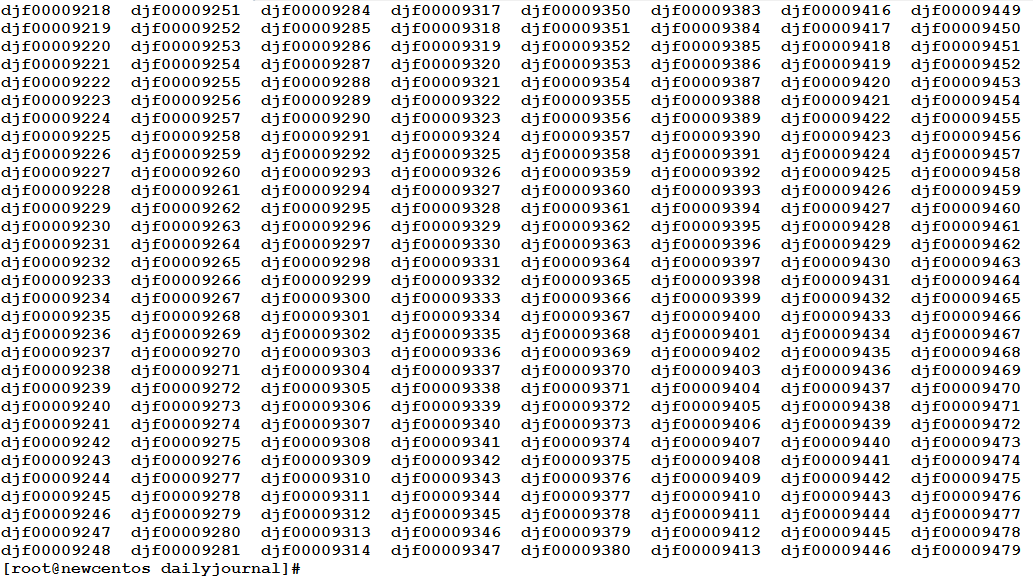

Are you serious?! Have a look, then, at "part" of the daily journal directory's contents.

– Ooops!!

Sorry if I shocked you, but in fact what you see are log files from just one week!! And the filenames don't contain anything that refers to the date; their names are just serial numbers!!! But there is a good trick. Look at the format of the long listing of any directory:

Haven't you noticed anything?! Huh!! Exactly!!! The date!! The date the file was last modified!! This is the key!! Now, we can filter the long listing of the directory as follows:

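A very simple trick solved the problem!! We use the grep command to filter the output for the date, written exactly as ls prints it: "Oct 14", "Feb 12", "May 23", etc. For single-digit days, ls pads the day with an extra space, so 1 January needs two spaces between "Jan" and "1".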
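The sketch below wraps this filter in a function and builds the date pattern with printf '%2d', so single-digit days get the same space padding that ls -l uses in the C locale (a pattern like "Jan 1" with one space would instead match the 10th to the 19th). The function name files_on_day is hypothetical, and the pattern can also match a file name that happens to contain the same text.

```shell
# files_on_day DIR MONTH DAY   (hypothetical helper)
# Print the long-listing lines of DIR whose modification date is MONTH DAY.
# DAY is a plain number such as 4 or 14.
files_on_day() {
    # LC_ALL=C keeps English month abbreviations and the classic date layout;
    # printf '%s %2d' pads single-digit days: "Oct  4", "Oct 14";
    # grep -F treats the pattern as a fixed string, not a regex.
    LC_ALL=C ls -l "$1" | grep -F "$(printf '%s %2d' "$2" "$3")"
}
```

For the audit example, files_on_day /path/to/journals Oct 14 would list the journals from the day before the problem; /path/to/journals is a placeholder for the real journal directory.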

After identifying the files, we need to send them to the audit department. We first need to copy them into another directory, and then transfer the entire directory using scp, FileZilla, or a similar tool.

– Do we have to do this manually for each file?! Where are your one-liners?!

Right here, and they have a solution for this problem too!! This solution will be the first topic of the next article: Effective One-Liners (2)

So, don’t miss it!!