The traditional approach to deploying applications was to install them with a package manager. This approach mixed the executable files, configuration files, and libraries of the application with those of the host. Virtual machines could be used to ensure predictability, but they suffer from performance and portability issues.

To resolve the problems of the traditional approach, containers were developed. They virtualize at the operating system level rather than at the hardware level. Containers are isolated from each other and from the host operating system: each container has its own file system, can be assigned specific resources, and runs processes that are not visible to processes in other containers. Compared with virtual machines, containers are more portable across environments and simpler to build, because they are not tied to the underlying infrastructure or host operating system.

Because containers are small and fast, each application can be packaged in its own image, which gives a one-to-one relationship between applications and images. Because the application is separate from the target deployment environment, its image can be created at build time instead of deployment time. This enables consistency between the development and deployment environments.

When using containers you reap benefits such as:

- By allocating specific resources to containers, you can predict how your application will behave.

- Containers are highly portable and they can be run locally or in the cloud.

- You can use the agile approach to create and deploy containers.

- You can use the DevOps approach. You can separate build and deployment activities, which frees you from being tied to a specific infrastructure.

- There is consistency in application behavior across different environments. You focus on building your application instead of worrying about how it will behave in different environments.

- Applications are broken down into smaller units that are manageable.

- You can use continuous integration.

Kubernetes, also known as K8s, is a technology developed by Google to manage containerized applications that run across a cluster. Kubernetes was developed to bridge the gap between clustered infrastructure and the environment requirements of applications.

Before adopting a technology, the key question to ask is which problems it will solve. Kubernetes addresses the challenges listed below:

- Verifying identity, and setting up rules for the authorization of users

- Debugging your applications

- Logging application events, which help in debugging and monitoring the health of your cluster

- Monitoring resource use among the different components of a cluster

- Automatically scaling the resources needed

- Managing the allocation of files to containers

Although Kubernetes is supported in multiple environments such as Google Compute Engine (GCE) and Amazon Web Services (AWS), this tutorial will focus on using GCE. Google provides a 60 day trial of GCE worth $300. To register for the trial, you will need to provide a valid card or bank account, but you will only be charged if you decide to upgrade to a paid account after the trial period expires. Visit the GCE website and register for a free account.

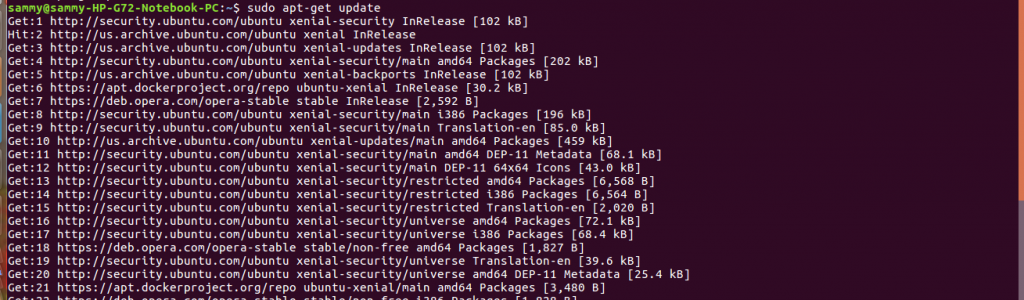

After signing up for the free account, you need to make sure Python and curl are installed. On a Debian-based system such as Ubuntu, the commands below will install them:

sudo apt-get update

sudo apt-get install python

sudo apt-get install curl

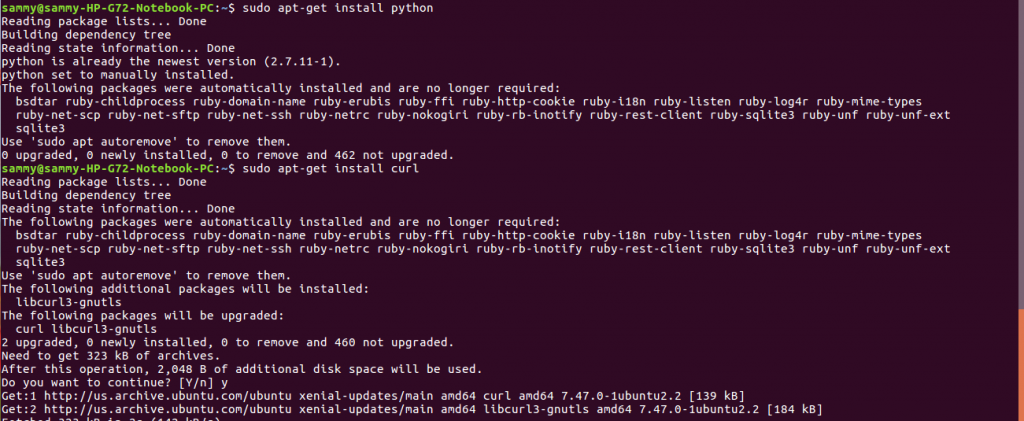

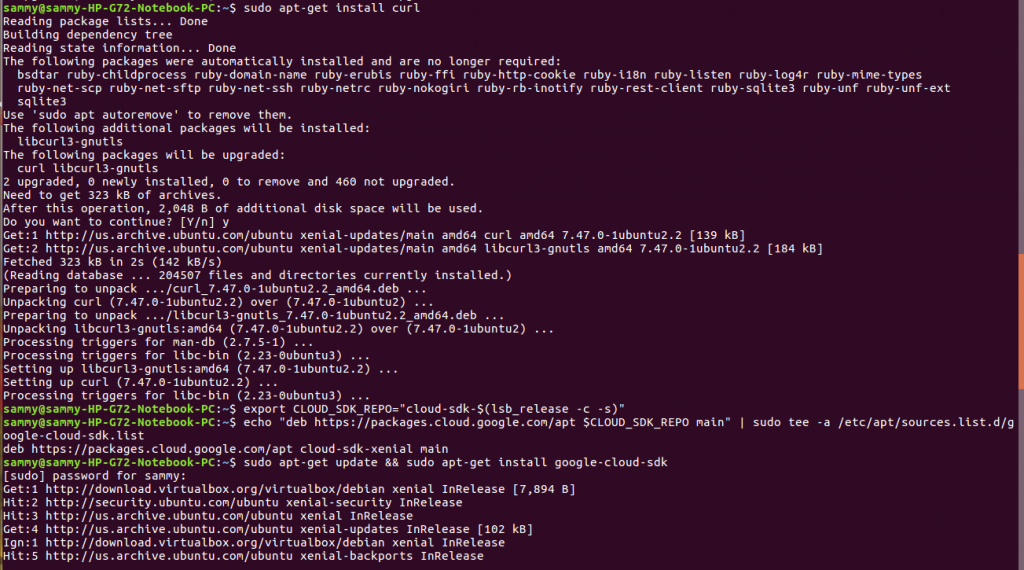
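
Before moving on, it can help to confirm that both tools are actually on your `PATH`. The snippet below is a minimal sketch, not part of the original steps: `check_tool` is a hypothetical helper name, and the extra check for `python3` is an assumption that covers newer releases where the interpreter may be packaged under that name instead of `python`.

```shell
# Report whether a given command is available on the PATH.
# check_tool is a hypothetical helper, not part of any SDK.
check_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    echo "$1: found"
  else
    echo "$1: missing"
    return 1
  fi
}

# Check the tools this tutorial relies on. python3 is included as an
# assumption for systems that no longer ship a plain "python" command.
for tool in python python3 curl; do
  check_tool "$tool" || true
done
```

If a tool is reported as missing, rerun the matching `apt-get install` command above before continuing.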

After installing Python and curl, the next step is to install the Google Cloud SDK, which contains the gcloud command-line tool. The installation takes several steps, which are listed below.

The first step is to create an environment variable that holds the SDK repository name for your distribution release, using the command below:

export CLOUD_SDK_REPO="cloud-sdk-$(lsb_release -c -s)"

The second step is to add a package source for the SDK by using the command below:

echo "deb https://packages.cloud.google.com/apt $CLOUD_SDK_REPO main" | sudo tee -a /etc/apt/sources.list.d/google-cloud-sdk.list

The final step is to update the package lists and install the SDK using the command below:

sudo apt-get update && sudo apt-get install google-cloud-sdk

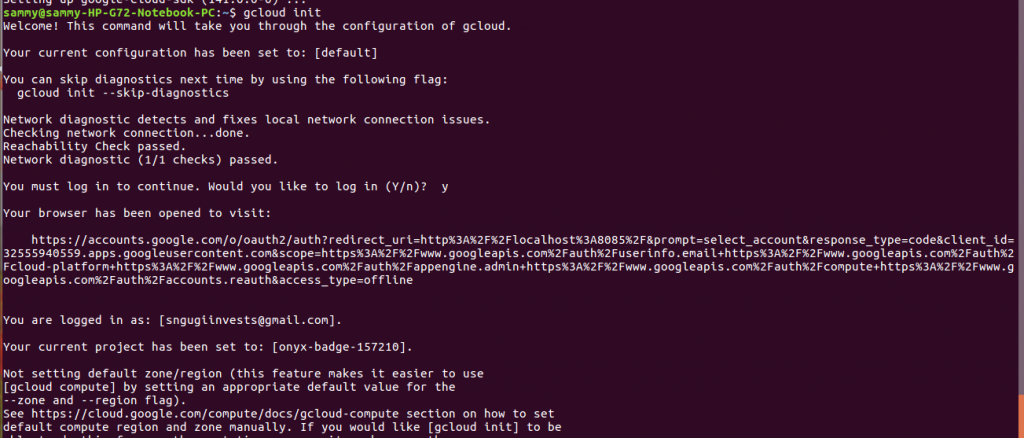
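
To see what the first two steps produce, the sketch below prints the package source line for a given release codename and checks whether it is already present. Because the command above uses `tee -a`, rerunning it appends the same line again, so a check like this can be useful. `sdk_repo_line` is a hypothetical helper name, and `CODENAME` is only a placeholder used when `lsb_release` is unavailable.

```shell
# Build the apt source line for a given release codename.
# sdk_repo_line is a hypothetical helper used for illustration only.
sdk_repo_line() {
  printf 'deb https://packages.cloud.google.com/apt cloud-sdk-%s main\n' "$1"
}

# Use this machine's codename, or a placeholder if lsb_release is missing.
codename="$(lsb_release -c -s 2>/dev/null || echo CODENAME)"
sdk_repo_line "$codename"

# Report whether the source file already contains exactly that line.
list=/etc/apt/sources.list.d/google-cloud-sdk.list
if [ -f "$list" ] && grep -qxF "$(sdk_repo_line "$codename")" "$list"; then
  echo "package source already configured"
else
  echo "package source not configured yet"
fi
```

If `apt-get update` reports a signature error for this repository, the likely cause is that Google's package signing key has not been imported; Google's installation instructions include a step for that, which is worth checking for your release.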

After the setup is complete, the SDK needs to be initialized before it can be used. Initialization authorizes the SDK to use your Google account and confirms that the setup is correct. Initialization is done using this command:

gcloud init

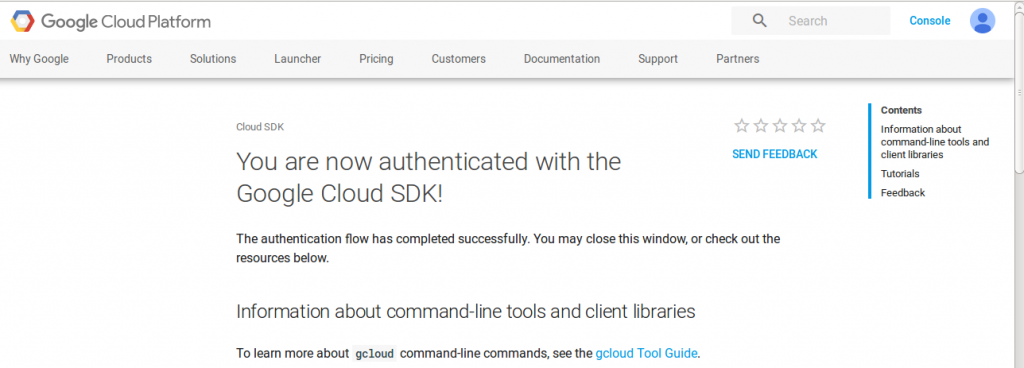

Once authentication is successful, a confirmation message will be displayed in your browser.

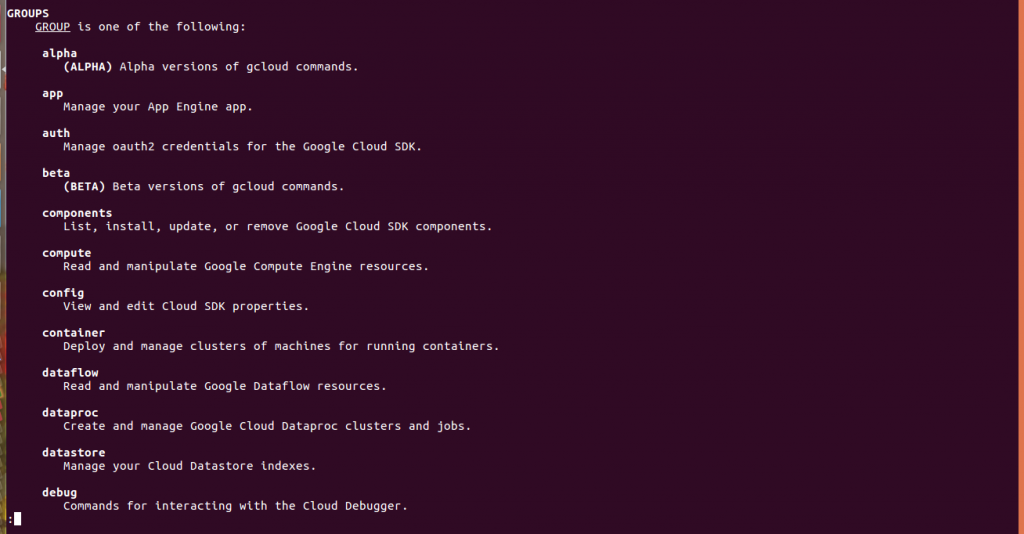
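
You can also confirm the result from the terminal. The sketch below prints the credentialed accounts and the active configuration. `show_gcloud_state` is a hypothetical helper name; `gcloud auth list` and `gcloud config list` are standard SDK commands.

```shell
# Print the accounts and settings that gcloud init configured.
# show_gcloud_state is a hypothetical helper used for illustration only.
show_gcloud_state() {
  if ! command -v gcloud >/dev/null 2>&1; then
    echo "gcloud not found on PATH"
    return 0
  fi
  gcloud auth list      # credentialed accounts, with the active one marked
  gcloud config list    # properties of the active configuration
}

show_gcloud_state
```

If no account is listed as active, running `gcloud init` again is a reasonable first step.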

To display information about your SDK and commands that are available, run the commands below:

gcloud info

gcloud help

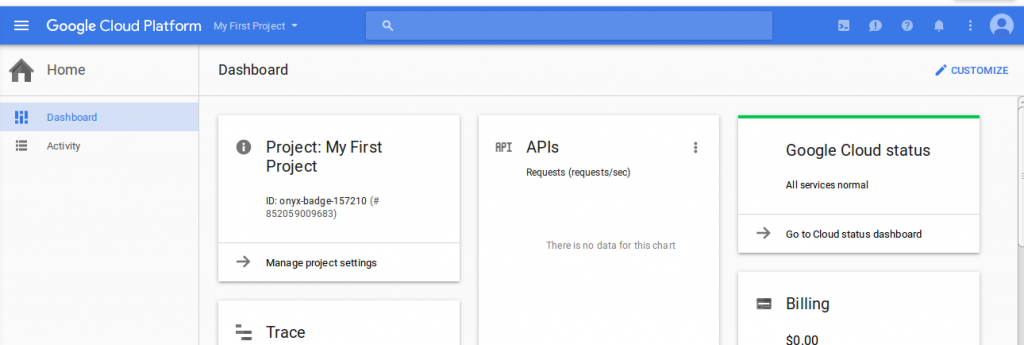

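When the SDK is initialized, a default project is set, which can be changed later. To display the currently configured project, use the command below. To list all projects available to your account, use gcloud projects list instead.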

gcloud config list project

The default project can be changed at any time by passing the project ID as shown in the command below.

gcloud config set project [project id]

The project ID can be found on your console dashboard, as shown in the screenshot below.

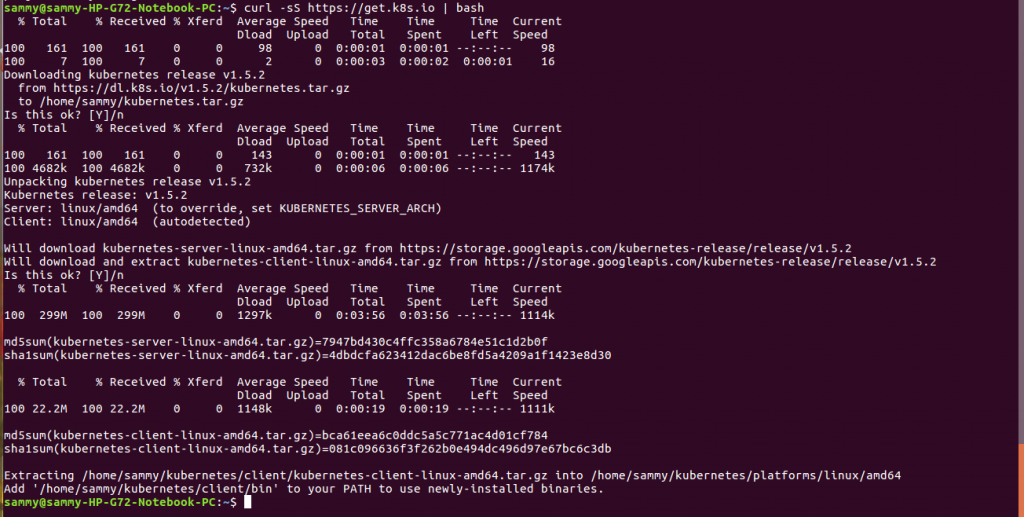
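
A mistyped project ID is an easy error to make here. The sketch below checks that an ID is well-formed before switching to it and then reads the setting back. It assumes the documented project ID format (6 to 30 characters; lowercase letters, digits, and hyphens; starting with a letter and not ending with a hyphen). `is_valid_project_id` and `switch_project` are hypothetical helper names, and `example-project-123` is a made-up ID.

```shell
# Return success if the argument matches the assumed project ID format.
is_valid_project_id() {
  printf '%s\n' "$1" | grep -Eq '^[a-z][a-z0-9-]{4,28}[a-z0-9]$'
}

# Validate the ID, set it as the default project, then read it back.
# switch_project is a hypothetical helper; it is defined but not called here.
switch_project() {
  if ! is_valid_project_id "$1"; then
    echo "not a well-formed project ID: $1" >&2
    return 1
  fi
  gcloud config set project "$1" && gcloud config get-value project
}

# Format check only; this does not contact Google Cloud.
is_valid_project_id "example-project-123" && echo "example-project-123 looks well-formed"
```

To use it, call `switch_project` with the ID from your dashboard. A well-formed ID can still be rejected by gcloud if the project does not exist or your account cannot access it.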

After installing the Google Cloud SDK, the next step is to install Kubernetes. This takes a single command:

curl -sS https://get.k8s.io | bash

By default, Kubernetes will use GCE as its provider.
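
Piping a downloaded script straight into bash means it runs before you can read it. The sketch below is a more cautious variant that saves the installer to a file first. It assumes the installer reads the `KUBERNETES_PROVIDER` environment variable and falls back to `gce` when it is unset, which is how the script has historically behaved; check the downloaded file to confirm. `k8s_provider`, `install_kubernetes`, and the file name `get-k8s.sh` are hypothetical names chosen for this example.

```shell
# Provider the installer is assumed to target:
# the value of KUBERNETES_PROVIDER if set, otherwise gce.
k8s_provider() {
  printf '%s\n' "${KUBERNETES_PROVIDER:-gce}"
}

# Download the installer to a file, then run it with the chosen provider.
# install_kubernetes is defined here but deliberately not called.
install_kubernetes() {
  curl -sS https://get.k8s.io -o get-k8s.sh || return 1
  KUBERNETES_PROVIDER="$(k8s_provider)" bash get-k8s.sh
}

echo "Provider: $(k8s_provider)"
```

To proceed, review `get-k8s.sh` after downloading it and then call `install_kubernetes`. This does the same work as the one-line command above, split into steps you can inspect.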

In this tutorial, we introduced containers as an approach for deploying applications. We noted the advantages of containers over virtual machines and discussed the benefits of using containers. We introduced Kubernetes as a solution for container management. We demonstrated how to install Python, curl, and the Google Cloud SDK. Finally, we demonstrated how to install Kubernetes. After reading this tutorial, you should understand why containers and Kubernetes are useful.