Introduction

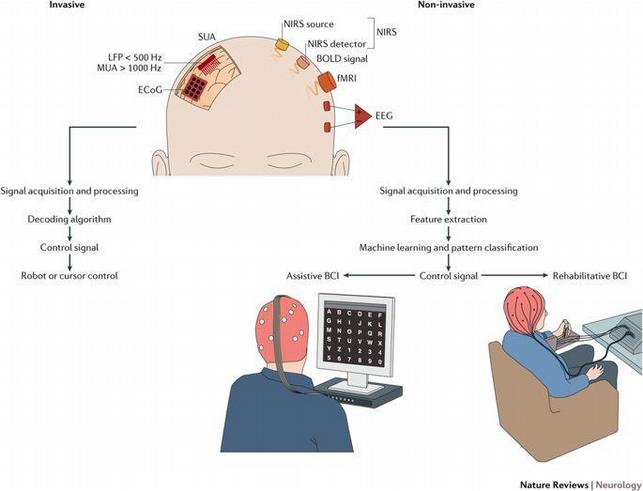

A Brain-Computer Interface (BCI) is a system that connects a living brain to a computer so that the signals produced in the brain can be used to operate the computer. Several methods are available for recording these signals, including:

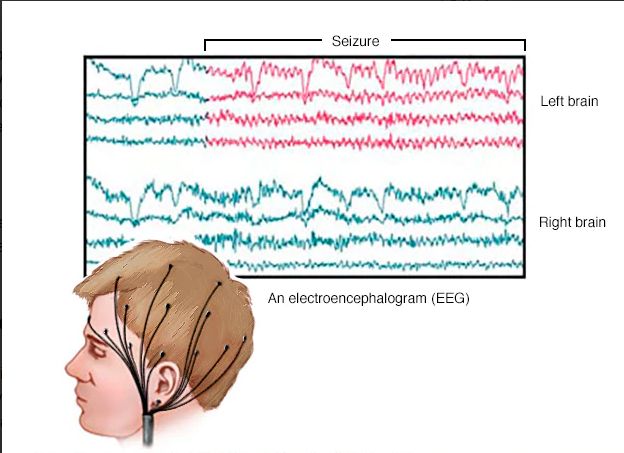

1. Electroencephalography (EEG) – It records the electrical activity of the brain using electrodes placed on the scalp.

2. Functional Magnetic Resonance Imaging (fMRI) – It records changes in blood flow in the brain.

3. Functional Near-Infrared Spectroscopy (fNIRS) – It uses near-infrared light to record brain activity through the hemodynamic (blood flow) responses that accompany neural activity.

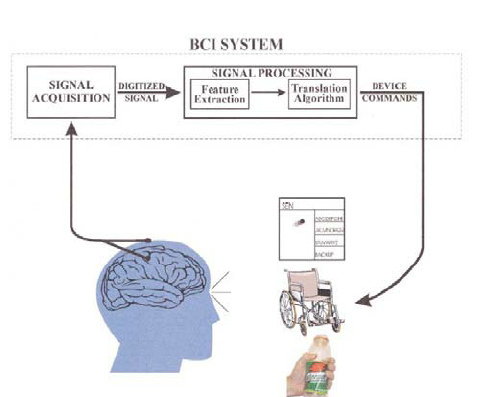

The operation of a BCI can be summarized in three steps:

1. Production of signals

2. Detection of signals

3. Processing of the detected signals and generation of output

History of Brain-Computer Interface

The idea of BCI was first coined in the 1970s, when research on it started at the University of California. The aim was to develop neuroprosthetics that could help restore damaged sight, hearing, and movement.

In the late 1990s, Philip Kennedy developed a device that could read brain signals, although not very precisely. His patented device consisted of glass cones containing microelectrodes coated with neurotrophic proteins. The coating was meant to promote binding of the electrodes to the extracellular matrix of the cerebral cortex.

In 2004, researchers demonstrated a non-invasive BCI and used it to operate a computer. The user wore a cap fitted with electrodes, which captured EEG signals from the cortex of the brain.

Since then, BCI development has picked up pace, and the technology has been used to control devices such as cursors and robots.

Recently, Elon Musk entered the industry with an investment of $27 million, aiming to develop BCI in combination with Artificial Intelligence to improve human communication. Facebook has also expressed interest in using Brain-Computer Interface technology for projects such as high-speed typing driven by brain signals.

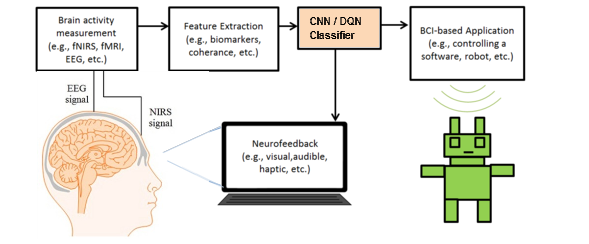

Structure of BCI

Controlling a device through BCI requires a specific chain of steps. First, there has to be brain activity, which is recorded using one of the methods mentioned above, such as EEG. Next, the recording is studied and the features that correspond to movement or other dynamic behavior of the brain are marked. Then comes the important part: feeding these features to the software, that is, converting the analog brain signals into digital signals that a computer can process and classify. A CNN or DQN classifier is typically used for this step. Both fall under the broad umbrella of Artificial Intelligence, and the software is trained with specific algorithms. Finally, the software drives an application, such as a robotic arm, a sensor, or a cursor.
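The chain of record, extract features, classify, and act can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the "recordings" are synthetic sine waves, the two mental states and their amplitudes are invented, the device commands are hypothetical, and a simple nearest-centroid classifier stands in for the CNN/DQN classifier mentioned above.

```python
import math
import random

FS = 250  # assumed sampling rate in Hz

def record_trial(amplitude, rng, n=FS, noise=0.2):
    """Stand-in for a recording: one second of a 10 Hz rhythm plus noise."""
    return [amplitude * math.sin(2 * math.pi * 10 * t / FS) + rng.gauss(0, noise)
            for t in range(n)]

def extract_features(signal):
    """Two simple features: mean power and mean sample-to-sample change."""
    power = sum(x * x for x in signal) / len(signal)
    change = sum(abs(b - a) for a, b in zip(signal, signal[1:])) / (len(signal) - 1)
    return (power, change)

def train_centroids(labelled_trials):
    """Average the feature vectors of each class (nearest-centroid training)."""
    sums, counts = {}, {}
    for label, signal in labelled_trials:
        features = extract_features(signal)
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in total)
            for label, total in sums.items()}

def classify(signal, centroids):
    """Return the label whose centroid is closest to the signal's features."""
    features = extract_features(signal)
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))

# Invented signal amplitudes for two hypothetical mental states.
AMPLITUDE = {"rest": 1.0, "intent": 0.4}
# Hypothetical commands sent to the controlled device.
COMMANDS = {"rest": "HOLD", "intent": "MOVE_ARM"}

rng = random.Random(0)
training = [(label, record_trial(amp, rng))
            for label, amp in AMPLITUDE.items() for _ in range(20)]
centroids = train_centroids(training)

new_trial = record_trial(AMPLITUDE["intent"], rng)
print(COMMANDS[classify(new_trial, centroids)])
```

In a real system each stage would be far more involved, but the data flow stays the same: raw signal in, feature vector in the middle, device command out.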

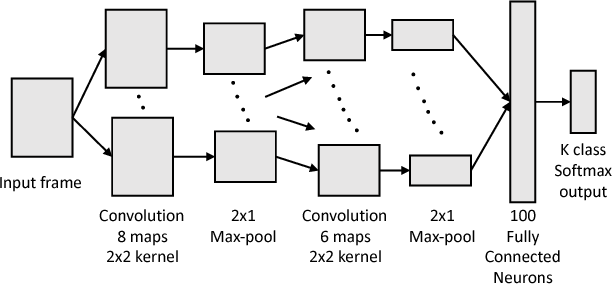

Why Use CNN in Brain-Computer Interface technology?

A Convolutional Neural Network (CNN) is a type of deep neural network. Its operation is loosely modeled on the visual cortex: it learns from inputs and adjusts the weights applied to them to reduce the classification error, using forward propagation and backpropagation. Just as the brain is built from many interconnected neurons, a CNN model is built from many connected artificial neurons. The advantage of a CNN is that it exploits hierarchical patterns in data: successive layers detect increasingly complex features, and the final layers combine them into a single output.
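To make the layer idea concrete, here is a minimal sketch of one convolutional stage applied to a one-dimensional signal: convolution, then a ReLU activation, then max pooling. The signal and the kernel values are made up for illustration, and only the forward pass is shown. In a trained CNN the kernel weights would be learned by backpropagation rather than written by hand.

```python
def conv1d(signal, kernel):
    """Slide the kernel over the signal (valid mode, no padding)."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def relu(values):
    """Keep positive responses and zero out negative ones."""
    return [max(0.0, v) for v in values]

def max_pool(values, size=2):
    """Keep the strongest response in each non-overlapping window."""
    return [max(values[i:i + size])
            for i in range(0, len(values) - size + 1, size)]

# Hand-made input with two "bursts" of activity.
signal = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0, 1.0, 1.0, 0.0]
# Hand-written kernel that responds to a rising edge (low, then high).
edge_kernel = [-1.0, 1.0]

feature_map = relu(conv1d(signal, edge_kernel))
pooled = max_pool(feature_map)

print(feature_map)  # [0.0, 1.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0]
print(pooled)       # [1.0, 0.0, 0.0, 1.0]
```

The feature map fires exactly where each burst begins, and pooling shortens it while keeping that information. Stacking several such stages is what produces the hierarchy of features described above.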

CNNs are often used in BCI work aimed at restoring impaired vision and at feeding other visual information to the computer.

Datasets can be obtained using fNIRS or EEG and then processed as needed to produce the desired results.

Bidirectional View of Brain-Computer Interface technology

So far we have discussed only one direction: the brain controlling a computer. A BCI can also work in the other direction, with the computer stimulating the nervous system. According to researchers, this could be the most powerful way of using BCI, because it could have a great impact in neurology and help address a variety of brain disorders.

This aspect of Brain-Computer Interface technology can be highly beneficial for people with disabilities. With proper development of the technology, tasks such as moving a wheelchair or operating a device could be done without manual handling.

How to Read EEG?

When electrodes are placed on the scalp to record changes in the brain's electrical signals, the EEG produces time-dependent graphs during the experiment, one for each electrode, showing the behavior of different parts of the brain. When a person thinks about something specific or performs a movement, the brain signals change, and these changes are recorded and can be identified on the EEG graphs.

These changes do not map to a unique behavior on their own, but they do show up as deviations from the regular pattern of the graph. In-depth studies of these deviations concluded that the spikes in the graph and their heights are related to our thought processes. Certain spike patterns can be observed over a certain period or during certain activities.

Using these trends, features are extracted and passed to the AI model, which maps them to actions in real applications.
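As a rough illustration of turning spikes and their heights into features, the sketch below flags samples that stand well above the rest of a trace and summarizes them. The trace is synthetic, and the rule used (a local peak more than `k` standard deviations above the mean) is an assumption chosen for simplicity, not a method taken from the studies mentioned above.

```python
import statistics

def find_spikes(trace, k=3.0):
    """Return (index, height) for local peaks above mean + k standard deviations."""
    threshold = statistics.fmean(trace) + k * statistics.pstdev(trace)
    return [(i, trace[i]) for i in range(1, len(trace) - 1)
            if trace[i] > threshold
            and trace[i] >= trace[i - 1]
            and trace[i] > trace[i + 1]]

def spike_features(trace, k=3.0):
    """Summarize a trace as (spike count, mean spike height)."""
    spikes = find_spikes(trace, k)
    if not spikes:
        return (0, 0.0)
    return (len(spikes), sum(height for _, height in spikes) / len(spikes))

# Synthetic trace: a small regular ripple with two inserted spikes.
trace = [0.1 if i % 2 == 0 else -0.1 for i in range(40)]
trace[10] = 2.0
trace[30] = 1.5

print(find_spikes(trace))     # [(10, 2.0), (30, 1.5)]
print(spike_features(trace))  # (2, 1.75)
```

A feature tuple like this one is the kind of compact summary that could then be handed to a classifier.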

Current Status of Brain-Computer Interface technology

At present, BCI is largely confined to the laboratory, where the work centers on experiments with test subjects and on gathering data. Because some techniques are invasive, health problems such as skin and skull infections have been recorded. Non-invasive techniques are still being developed.

Various universities are studying BCI, and its advancement in medical science would be a major achievement. Brain-computer interfaces are becoming increasingly reliable and are changing the lives of patients, particularly those who suffer from paralysis or similar conditions.

Brain-Computer Interface technology in the Future

Because of errors in feature extraction, BCI has not yet reached its full potential. Recordings from the brain normally contain a lot of noise and do not provide data of the quality required. One reason for this is the use of non-invasive techniques, although safety rightly remains the first priority for scientists and researchers. Another problem is the time dependence of the output: electrical signals in a living body change from millisecond to millisecond, which makes the output difficult to interpret.

To reduce these problems, a feedback mechanism is also used when training the CNN.
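The effect of noise on a recording, and one very simple way to reduce it, can be shown with a moving-average filter. This is a sketch on synthetic data: the 10 Hz "clean" rhythm, the 250 Hz sampling rate, and the noise level are all assumptions, and a moving average is used here only because it is easy to follow, not because it is the filter a real BCI would use.

```python
import math
import random

def moving_average(signal, window=5):
    """Smooth with a centred moving average; the window shrinks at the edges."""
    half = window // 2
    smoothed = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed

def rms_error(a, b):
    """Root-mean-square difference between two equally long signals."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

rng = random.Random(42)
fs = 250  # assumed sampling rate in Hz
clean = [math.sin(2 * math.pi * 10 * t / fs) for t in range(fs)]
noisy = [x + rng.gauss(0, 0.5) for x in clean]
smoothed = moving_average(noisy)

print(f"error before smoothing: {rms_error(noisy, clean):.3f}")
print(f"error after smoothing:  {rms_error(smoothed, clean):.3f}")
```

Smoothing brings the trace closer to the underlying rhythm, but it also blurs fast changes, which is the same time-dependence trade-off described above.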

We can hope that in the near future Brain-Computer Interface technology will be much more refined and that we will see it used in everyday life.